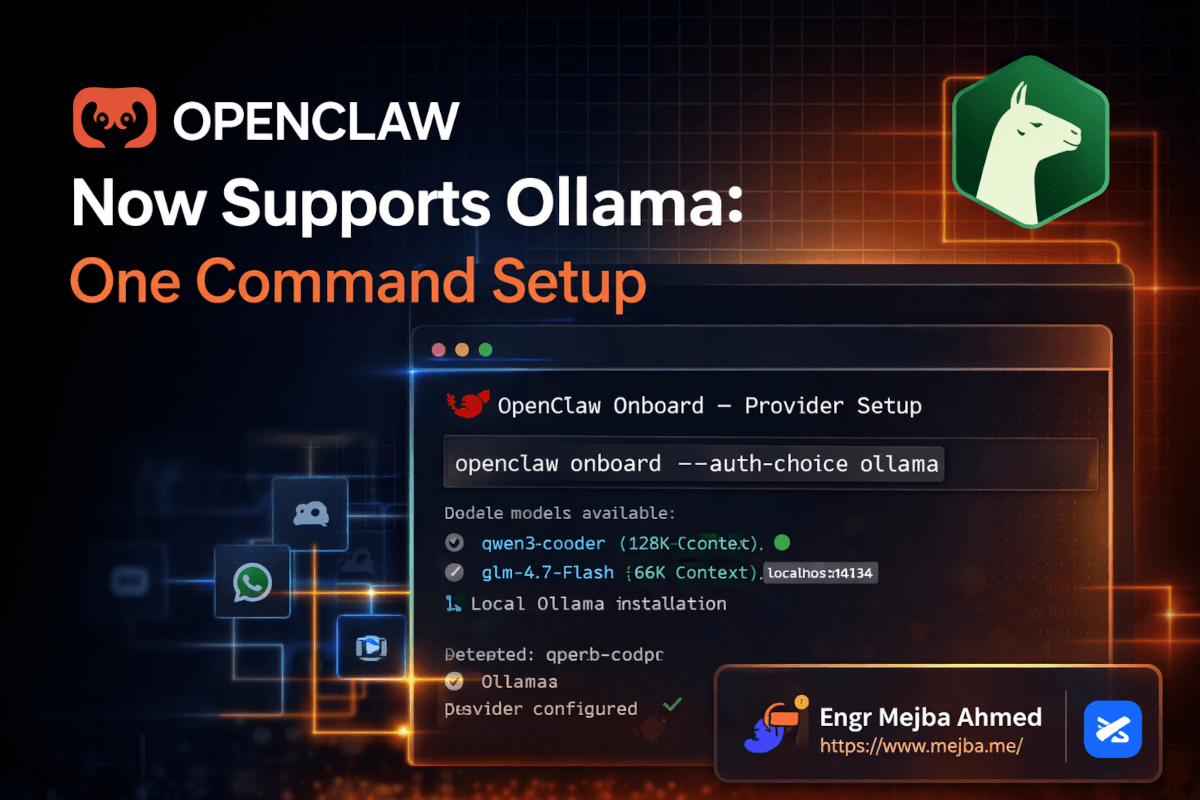

OpenClaw Now Supports Ollama: One Command Setup

I was halfway through configuring yet another cloud API key — my fourth this month — when a notification popped up in the OpenClaw Discord. Ollama was now an official provider. Not a community hack. Not a workaround involving three config files and a prayer. An official, first-class integration backed by one single onboarding command.

I dropped what I was doing and ran it immediately.

openclaw onboard --auth-choice ollama

That was it. My local Ollama models — the ones I'd been running for code generation, writing assistance, and data analysis — were suddenly available through every chat app I use. WhatsApp. Telegram. Discord. No API keys. No cloud bills. No latency spikes at 2 PM when half the planet decides to hit the same inference endpoint.

What happened next over the following week fundamentally changed how I think about personal AI assistants. And honestly, it made me wonder why I'd been paying for cloud inference this whole time.

Why This Announcement Matters More Than You Think

Here's some context that makes this Ollama integration significant beyond the obvious "cool, another provider" reaction.

OpenClaw — the open-source personal AI assistant created by Peter Steinberger (@steipete) — has exploded in popularity since late January 2026. The project hit 247,000 GitHub stars by early March. It's not just a chatbot sitting in your terminal. OpenClaw is a full agentic system that runs locally on your machine and connects to your messaging apps. It reads emails, manages calendars, checks you into flights, browses the web, writes and executes code — all triggered from a simple chat message.

The catch, until now, was the AI backbone. You needed a cloud LLM provider. Claude, GPT, DeepSeek — all solid options, but all requiring API keys, usage tracking, and monthly bills that scale with how much you actually use your assistant. For developers like me who use AI agents dozens of times per day, those costs add up fast.

Ollama changes that equation completely. Run your models locally, pay nothing for inference, and keep every conversation on your own hardware. The privacy implications alone are worth paying attention to — but the cost savings and zero-latency local execution? That's what got me excited enough to test this the same hour it dropped.

But before I walk you through the setup and what I learned running this for a week, there's a piece of this story most people are missing — and it has to do with which models actually work well as an OpenClaw brain.

The Origin Story You Should Know

If you've been following the OpenClaw saga, you know the name has been on quite a journey. Peter Steinberger originally launched it as Clawdbot in November 2025. The project went viral in late January 2026 — partly because the concept of running a personal AI agent from WhatsApp was genuinely novel, and partly because @steipete has a knack for building things that developers immediately want to use.

Then came the trademark situation with Anthropic, a quick rename to Moltbot (which, to be fair, never quite rolled off the tongue), and finally the landing on OpenClaw. The lobster branding stuck through all of it. If you've seen the iconic lobster emoji everywhere in AI developer circles lately, now you know where it comes from.

The name "OpenClaw" and the molting metaphor actually captures what makes this project special. Lobsters shed their shells to grow. OpenClaw keeps shedding limitations — first the closed-source model, then the single-provider dependency, and now the requirement for cloud APIs entirely.

I want to specifically call out @steipete here. Not just for building OpenClaw in the first place, but for the way the project has been shepherded through its explosive growth phase. The Ollama integration didn't happen in isolation — it was reviewed, tested, and refined with real community input. And honestly, having someone with Steinberger's engineering background (he built PSPDFKit, one of the most respected PDF SDKs in mobile development) leading the architecture decisions gives me confidence that this isn't just a hack job bolted onto the side. The integration is solid because the review process was rigorous.

Special thanks to @steipete for helping with and reviewing the Ollama provider integration. The open-source AI community is better when experienced engineers invest their time in getting the foundations right.

So what does the actual setup look like? It's almost anticlimactically simple.

How to Set Up OpenClaw With Ollama in Under Five Minutes

I'm going to walk through exactly what I did, including the small gotchas I hit, so you can avoid them.

Step 1: Make Sure Ollama Is Running

This sounds obvious, but I've seen people trip on it. You need Ollama installed and running with at least one model pulled.

# Check Ollama is running

ollama list

# If you don't have a model yet, grab one

# OpenClaw recommends models with 64K+ context windows

ollama pull qwen3-coder

The context window requirement is the thing most guides skip over. OpenClaw needs at least 64,000 tokens of context to reliably handle multi-step agentic tasks — the kind where it's reading an email thread, drafting a response, checking your calendar for conflicts, and then sending the reply. Short-context models will work for simple queries but choke on anything requiring sustained reasoning across multiple tool calls.

My recommended models for OpenClaw as of March 2026:

| Model | Context | Best For |

|---|---|---|

| qwen3-coder | 128K | Code generation, technical tasks |

| glm-4.7 | 128K | General-purpose assistant work |

| glm-4.7-flash | 64K | Faster responses, lighter tasks |

| gpt-oss:20b | 128K | Good balance of speed and capability |

| kimi-k2.5 | 128K | Strong reasoning, multi-step tasks |

I've been running qwen3-coder for most tasks and switching to glm-4.7-flash when I just need quick answers. The beauty of local models is switching costs nothing — no different API key, no different billing tier. Just change the model name.

Step 2: Run the Onboarding Command

Here's where the magic happens:

openclaw onboard --auth-choice ollama

The wizard does several things automatically:

- Detects your Ollama installation — it checks the default address (usually

http://localhost:11434) and confirms the connection - Lists your available models — every model you've pulled shows up for selection

- Sets your default model — if you only have one model, it auto-selects. Otherwise, you pick.

- Configures the provider — writes the necessary config so OpenClaw knows to route all inference requests to your local Ollama instance

- Installs the gateway daemon — the background process that bridges your chat apps to the OpenClaw agent

The whole process took me about 90 seconds. No JSON files to hand-edit. No environment variables to export. No Docker containers to orchestrate. Just answer the prompts and you're done.

🦞 OpenClaw Onboard — Provider Setup

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

Detected Ollama at localhost:11434 ✓

Available models:

→ qwen3-coder (128K context) ✓ recommended

→ glm-4.7-flash (64K context) ✓ compatible

→ llama3.3 (8K context) ⚠ low context

Selected: qwen3-coder

Gateway daemon installed ✓

Provider configured ✓

Your lobster is ready. 🦞

That last line made me smile. The OpenClaw team's personality comes through even in CLI output.

Step 3: Connect Your Chat Apps

This is the part where OpenClaw becomes genuinely different from running Ollama alone. Ollama by itself is a local inference server — powerful, but you interact with it through a terminal or an API call. OpenClaw turns it into an assistant that lives in the apps you already have open all day.

# Connect WhatsApp (uses QR code pairing)

openclaw connect whatsapp

# Connect Telegram

openclaw connect telegram --token YOUR_BOT_TOKEN

# Connect Discord

openclaw connect discord --token YOUR_BOT_TOKEN

I connected WhatsApp first because that's where I spend most of my messaging time. The QR code pairing took about 10 seconds. After that, I could message my OpenClaw bot from WhatsApp and get responses powered entirely by my local qwen3-coder model.

No data leaving my machine. No API costs. The response was coming from the GPU sitting three feet from me.

Step 4: Test It With a Real Task

Don't just say "hello" and call it done. Give it something that exercises the agentic capabilities:

Me: Check my calendar for tomorrow and draft a summary

of what I have scheduled. If there are any conflicts,

flag them.

OpenClaw 🦞: Looking at your calendar for March 17...

You have 4 events tomorrow:

- 9:00 AM - Standup (30 min)

- 10:30 AM - Client call with Ramlit team (1 hr)

- 11:00 AM - Code review session (1 hr) ⚠️ CONFLICT

- 2:00 PM - Deploy planning (45 min)

⚠️ Conflict detected: "Client call" (10:30-11:30)

overlaps with "Code review" (11:00-12:00) by 30 minutes.

Suggestion: Move code review to 1:00 PM? That gives you

a 1-hour buffer before deploy planning. Want me to

reschedule it?

That entire interaction — calendar access, conflict detection, suggestion generation — ran on my local Ollama model. The response came back in about 4 seconds. With a cloud API, I'd be looking at network latency on top of inference time, plus paying for the privilege.

Here's the thing that surprised me most: the quality difference between running qwen3-coder locally through OpenClaw versus using a cloud model was smaller than I expected. For routine assistant tasks — email drafting, calendar management, quick code questions, file organization — the local model handled it beautifully. I only noticed the gap when doing complex multi-step reasoning chains that required holding large amounts of context simultaneously.

If you'd rather have someone build this kind of personal AI assistant setup from scratch tailored to your workflow, I take on custom AI automation engagements. You can see what I've built at fiverr.com/s/EgxYmWD.

What Happens When All Your Ollama Models Work With OpenClaw?

This is the part I didn't fully appreciate until I'd been running the setup for a few days. When Ollama is your OpenClaw provider, you don't just get access to one model. You get access to every model you've pulled. And you can switch between them on the fly.

Me: /model glm-4.7-flash

OpenClaw 🦞: Switched to glm-4.7-flash ✓

Me: Quick — what's the CORS header syntax for allowing

specific origins?

OpenClaw 🦞: Access-Control-Allow-Origin: https://yourdomain.com

For multiple origins, you'll need server-side logic —

the header only accepts one origin or *. In Express:

app.use(cors({

origin: ['https://a.com', 'https://b.com']

}));

The response from glm-4.7-flash came back in under a second. For quick factual lookups and syntax reminders, the smaller model is perfect. I don't need a 120-billion-parameter model to remind me of a CORS header.

But for deep tasks — debugging a complex race condition, analyzing a codebase, or drafting a detailed technical document — I switch to qwen3-coder and let it take its time. The ability to match model capability to task complexity is something you just can't do as fluidly with cloud providers. With Ollama, the cost of switching is literally zero.

The Model Routing Pattern I Settled On

After a week of experimentation, here's how I've been using different models:

Quick questions and lookups — glm-4.7-flash. Fast, cheap (free), accurate for factual content. I use this for probably 60% of my daily interactions.

Code generation and review — qwen3-coder. Better at maintaining context across long code files, understands framework-specific patterns, and generates more production-ready code.

Long-form writing and analysis — glm-4.7 or kimi-k2.5. When I need the model to hold a lot of context and produce nuanced output, these are my go-to choices.

Experimental and weird requests — whatever new model I've pulled that week. One of the joys of Ollama is trying new models as they drop. Last week I tested gpt-oss:20b for the first time and was impressed by its reasoning on math-heavy tasks.

The point is: OpenClaw doesn't care which model you're running. The integration treats Ollama as a unified provider. Your chat apps don't know or care whether the response came from a 7-billion or 120-billion parameter model. That abstraction layer is clean, and it's one of the reasons this integration feels like a first-class feature rather than a bolted-on afterthought.

Running OpenClaw Locally: What Nobody Warns You About

I'd be doing you a disservice if I made this sound like pure magic with no trade-offs. I hit real limitations during my week of testing, and you should know about them before you go all-in on local inference.

GPU Memory Is Your Bottleneck

The bigger models — gpt-oss:120b, kimi-k2.5, anything over 30 billion parameters — need serious VRAM. I'm running a machine with a capable GPU, and I still had to be thoughtful about which model I kept loaded. Ollama can offload to CPU, but the speed difference is brutal. A query that takes 3 seconds on GPU takes 40 seconds on CPU for a large model.

My advice: Start with a model that fits comfortably in your GPU memory. qwen3-coder at its default quantization runs beautifully on 16GB VRAM. Don't reach for the biggest model because it sounds impressive — reach for the one that responds fast enough to not break your flow.

Context Window Isn't Just a Number

OpenClaw's documentation says 64K minimum context, and they mean it. I tried running a 32K-context model and hit issues on the third multi-step task. The agent lost track of the conversation history mid-task and started repeating actions it had already completed. Bumping to a 64K+ model fixed it instantly.

This makes sense when you think about what OpenClaw is doing. It's not just having a conversation — it's maintaining tool call history, function results, system prompts, and your entire message thread. That overhead eats context fast. A model with 8K context might answer a single question fine, but it falls apart the moment OpenClaw needs to chain three or four actions together.

First Response Is Slow (Then It's Fast)

Ollama loads models into memory on first use. If the model isn't loaded when you send your first message of the day, expect a 10-30 second delay while it loads. After that, responses are fast — often faster than cloud APIs because you've eliminated network round-trips entirely.

I solved the cold-start problem by adding a simple cron job that sends a dummy request to Ollama every morning at 8 AM:

# Add to crontab: keep model warm

0 8 * * * curl -s http://localhost:11434/api/generate \

-d '{"model":"qwen3-coder","prompt":"ping","stream":false}' \

> /dev/null 2>&1

Small hack, big quality-of-life improvement.

Not Every Task Belongs on a Local Model

I'll be honest about this: for complex, multi-step agentic workflows that require strong reasoning over very long contexts — think "analyze this entire codebase and generate a migration plan" — cloud models like Claude still have an edge. The gap is closing every month as open-source models improve, but it exists today.

My approach is pragmatic. I use local Ollama models through OpenClaw for 80% of my daily tasks — the routine stuff that happens dozens of times per day. For the remaining 20% that demands frontier-level reasoning, I keep a cloud provider configured as a fallback. OpenClaw supports multiple providers simultaneously, so switching is seamless.

The cost savings from moving 80% of queries to local inference have been significant. My cloud API bill dropped from around $45/month to under $10.

Beyond Chat: The Tasks That Surprised Me

I expected OpenClaw + Ollama to handle chat well. What I didn't expect was how capable it would be at tasks I'd never bothered trying with a local model before.

Email Triage From WhatsApp

Every morning, I send one message to my OpenClaw bot:

Me: Morning brief — check email, flag anything urgent,

summarize the rest

Two minutes later (the email scanning takes a moment), I get a prioritized summary. Urgent items at the top with recommended actions. Non-urgent grouped by category. Newsletter spam identified and ready for archive. All processed by qwen3-coder sitting on my desk.

The fact that this happens from WhatsApp — the same app I'm checking anyway — means I actually use it every day. When it required opening a separate terminal and running a Python script, I'd skip it half the time.

Code Review Requests Via Telegram

I connected OpenClaw to the Telegram group I use for side project collaboration. Now I can paste a code snippet or a PR link and get instant feedback:

Me: Review this function — anything wrong?

[pastes function]

OpenClaw 🦞: Two issues:

1. Line 12: You're not awaiting the async call inside

the loop. This will fire all requests simultaneously

instead of sequentially. If the API has rate limits,

you'll hit them.

2. Line 23: The error catch swallows the exception

silently. At minimum, log it. Better: propagate it

with context about which iteration failed.

Want me to rewrite it with both fixes?

That review came from a local model. No code left my machine. For someone working on client projects with NDAs, the privacy angle isn't a nice-to-have — it's a requirement.

File Management and Organization

This one caught me off guard. I asked OpenClaw to help organize a messy downloads folder:

Me: Organize my ~/Downloads folder — group files by type,

move anything older than 30 days to archive, and list

what you did

OpenClaw 🦞: Done. Here's what I moved:

📁 Documents (14 files) → ~/Downloads/docs/

📁 Images (43 files) → ~/Downloads/images/

📁 Code (8 files) → ~/Downloads/code/

📁 Archives (6 files) → ~/Downloads/archives/

📁 Misc (3 files) → ~/Downloads/other/

Archived (>30 days): 23 files → ~/Archive/2026-02/

Total: 97 files organized. 23 archived.

Running this through a cloud API would mean uploading my file listing to someone else's server. With local Ollama, the directory never leaves my machine.

How Does OpenClaw + Ollama Compare to Cloud-Only Setups?

I ran both setups side by side for five days. Here's what I found — no fabricated benchmarks, just my honest experience across real daily usage.

Response Speed for Simple Tasks: Local Ollama was consistently faster. No network latency means sub-second responses for quick queries. Cloud providers typically added 1-3 seconds of overhead even for simple responses.

Response Quality for Complex Reasoning: Cloud models (particularly Claude) still handled multi-step reasoning more reliably. When I asked OpenClaw to plan a complex deployment workflow involving eight sequential steps with conditional branching, the cloud model nailed it. The local model got about 80% right and needed one correction.

Cost Over Five Days: Cloud-only setup cost me approximately $8 in API calls. The Ollama setup cost $0. My electricity bill didn't noticeably change — the GPU was already running for other tasks.

Privacy: No contest. Local wins absolutely. Every query, every file reference, every email snippet stayed on my hardware.

Reliability: Cloud depends on internet connectivity and provider uptime. During a brief internet outage on day three, my local OpenClaw setup kept working without missing a beat. The cloud setup was completely dead.

The hybrid approach — Ollama for daily tasks, cloud for complex reasoning — gave me the best of both worlds. And OpenClaw makes switching between them trivially easy.

Setting Up the Hybrid Approach: Local + Cloud Fallback

If the hybrid model sounds appealing, here's how I configured it:

# Primary: Ollama (local, free, private)

openclaw onboard --auth-choice ollama

# Secondary: Cloud provider as fallback

openclaw provider add --name claude --type anthropic \

--api-key $ANTHROPIC_API_KEY

# Set routing rules

openclaw config set routing.default ollama

openclaw config set routing.complex claude

With this configuration, OpenClaw routes most requests to Ollama by default. When I explicitly ask for the cloud model (using /model claude in chat) or when a task exceeds a complexity threshold, it routes to the cloud provider.

The setup gives me a monthly AI bill under $10 while still having access to frontier models when I genuinely need them. Before this, I was spending $40-50/month. Over a year, that's nearly $500 saved — and my day-to-day assistant experience is actually better because local inference is faster for routine tasks.

What This Means for the Future of Personal AI

I want to zoom out for a moment because this Ollama integration represents something bigger than one feature announcement.

When OpenClaw launched, the assumption was that personal AI assistants needed cloud LLMs. The local model landscape in late 2025 just wasn't good enough for reliable agentic work. Models couldn't hold enough context. They weren't fast enough. They made too many mistakes on multi-step tasks.

That changed rapidly. By early 2026, models like qwen3-coder and glm-4.7 became capable enough to handle real assistant workloads. Ollama made running them locally dead simple. And now OpenClaw has made connecting them to your daily workflow a single command.

The trajectory is clear: personal AI is going local-first. Cloud becomes the exception for frontier-level tasks, not the default for everything. And the OpenClaw + Ollama stack is the most accessible way to make that shift right now.

@steipete made a decision early on to keep OpenClaw model-agnostic. No lock-in to one provider. No preferential treatment for cloud over local. That architectural decision is paying dividends now. When Ollama became viable as a serious provider, the integration was clean because the abstraction layer was already there. Good engineering decisions compound over time — and this is a textbook example.

The One-Hour Challenge: Try This Today

I don't want this to be another article you read, nod along to, and then forget. So here's a concrete challenge.

Set a one-hour timer. In that hour:

- Install Ollama if you haven't already (5 minutes)

- Pull qwen3-coder —

ollama pull qwen3-coder(depends on your internet speed, but the download runs in the background) - Install OpenClaw from the GitHub repo (10 minutes including build)

- Run the onboard command —

openclaw onboard --auth-choice ollama(2 minutes) - Connect one chat app — whichever you use most (5 minutes)

- Give it three real tasks — not "hello," not toy examples. Ask it to do something you'd normally open a browser or terminal for.

If your experience is anything like mine, you'll be hooked by task two. There's something genuinely delightful about sending a WhatsApp message and getting back a useful response powered entirely by hardware you own.

The lobster has arrived on your local machine. And it's ready to work.

FAQ

Frequently Asked Questions

Everything you need to know about this topic

Yes — every model available in your local Ollama installation works with OpenClaw after running openclaw onboard --auth-choice ollama. The onboarding wizard detects all pulled models automatically. For reliable multi-step agent tasks, choose models with at least 64K context windows. For the full model recommendation list, see the setup section above.

Plan for 8-16GB of VRAM minimum. Models like qwen3-coder run comfortably on 16GB VRAM at default quantization. Smaller models like glm-4.7-flash work on 8GB. You can offload to CPU, but response times increase significantly — from 3 seconds to 30+ seconds for larger models.

Absolutely. OpenClaw supports multiple providers simultaneously. Configure Ollama as your default for daily tasks and add a cloud provider (Claude, GPT, DeepSeek) as a fallback for complex reasoning. See the hybrid setup walkthrough above for the exact commands.

Completely. With Ollama as your provider, all inference runs on your local machine. No queries, file contents, or conversation data leave your hardware. This makes the setup ideal for developers working under NDAs or handling sensitive client data.

OpenClaw connects to WhatsApp, Telegram, Discord, Signal, Slack, and iMessage. Each app has its own connect command — openclaw connect [app] — and setup typically takes under five minutes per platform.

Let's Work Together

Looking to build AI systems, automate workflows, or scale your tech infrastructure? I'd love to help.

- Fiverr (custom builds & integrations): fiverr.com/s/EgxYmWD

- Portfolio: mejba.me

- Ramlit Limited (enterprise solutions): ramlit.com

- ColorPark (design & branding): colorpark.io

- xCyberSecurity (security services): xcybersecurity.io