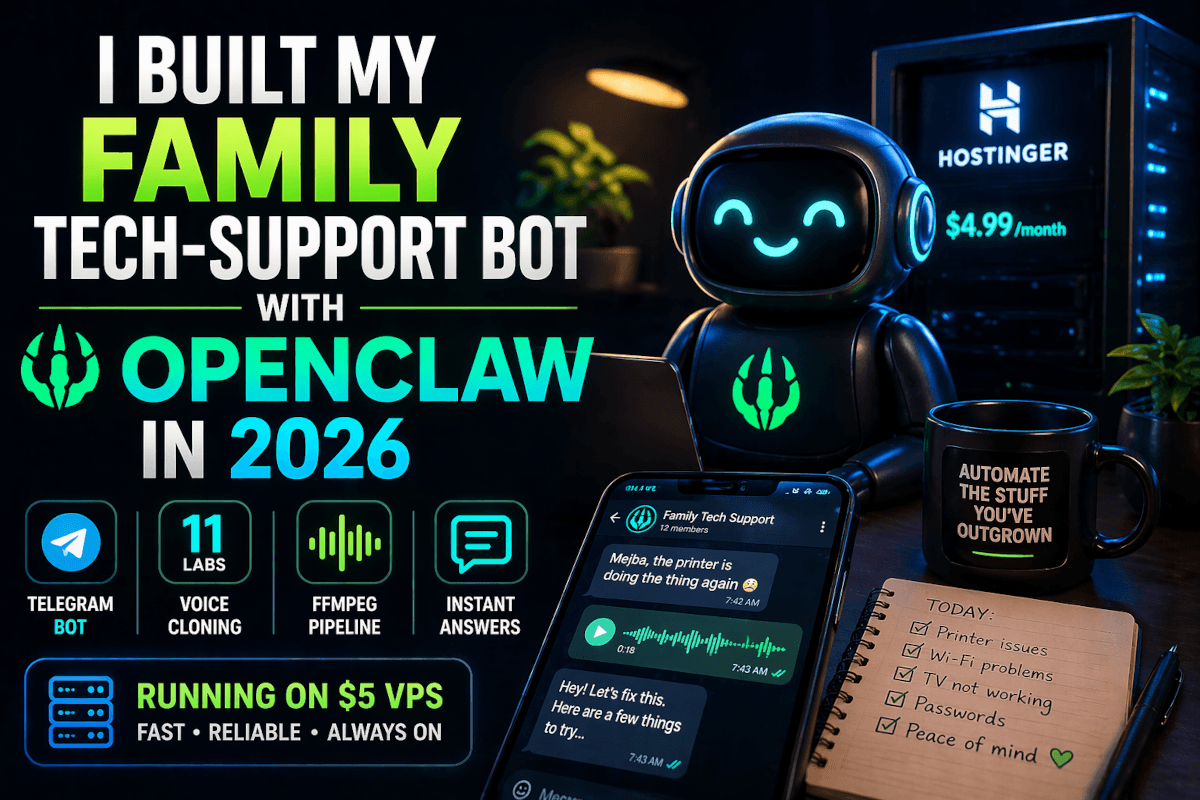

OpenClaw: Autonomous AI Agent With Hidden Risks

200,000 GitHub stars in a few months. A plugin marketplace with over 10,000 community skills. An architecture sophisticated enough that serious engineers are calling it a genuine breakthrough in autonomous AI design.

And 30,000 live instances exposed on the public internet — many with default ports and plaintext credentials — right now.

That tension is OpenClaw in a sentence. And it's exactly why I spent the last week pulling apart its architecture, reading the security disclosures, and working out what "safe usage" actually looks like versus what people are doing in practice.

Here's my honest take as someone who builds AI systems professionally: OpenClaw's design is genuinely impressive. The decisions the team made around session isolation, memory architecture, and skill execution borrow patterns from operating systems and databases — not from the typical AI startup playbook of "make it work first, worry about the rest later." These are good ideas implemented thoughtfully.

The security situation is a separate story. In January, a critical vulnerability let any malicious webpage silently hijack your authentication token by exploiting a missing websocket origin check. A coordinated campaign seeded the plugin marketplace with malware targeting cryptographic keys and agent credentials. Meta banned the tool internally.

These aren't edge case problems. They're the gap between "architecturally elegant" and "ready for unsupervised deployment on your personal machine."

Understanding both sides — the sophistication and the exposure — is what this post is about. If you're an AI developer or a security-aware builder evaluating autonomous agents, you need the full picture. Not just the demo. Not just the CVE list. Both.

What OpenClaw Is — And Why It's Different From Every AI Tool You've Used

Every AI tool you've probably used operates reactively. You open ChatGPT, type a question, get a response. You open Copilot, write some code, get suggestions. The AI sits dormant until you explicitly invoke it.

OpenClaw doesn't wait.

It's a self-hosted autonomous agent that runs continuously on your device — laptop, VPS, Mac Mini, whatever you deploy it on — and acts proactively based on triggers. An email arrives matching a certain pattern: OpenClaw wakes up, processes it, drafts a response, schedules a follow-up. A calendar event is forty-eight hours away: the agent starts gathering relevant context and preparing briefing notes. A file in a monitored folder changes: a workflow fires automatically.

It integrates with WhatsApp, Slack, Telegram, Discord, Signal, iMessage, your email clients, your calendar, and your file system. Not through clunky APIs that require you to configure webhooks manually — through a local gateway server that acts as a unified message broker across all of them.

That "always on" architecture is what makes OpenClaw categorically different from the tools most developers are familiar with. The comparison isn't to ChatGPT or Copilot. It's closer to having a junior employee who has access to all your communication channels and can act on your behalf — without asking permission each time.

That description should immediately trigger both excitement and concern. Both are the correct response.

The Architecture: Why Engineers Are Taking This Seriously

Before the security problems, the design deserves its own discussion. Because the team made choices that most AI projects don't make — choices that show genuine systems thinking.

OpenClaw runs on a four-layer architecture.

The Gateway is a local websocket server that acts as message broker and orchestrator. Every connected platform — every messaging app, every email client, every integration — routes through the gateway. It handles authentication, routing, and coordination between the other layers. Think of it as the kernel interrupt handler for the agent's communication stack.

The Reasoning Layer hosts the LLM. But it's not a simple pass-through. The reasoning layer merges incoming messages with system context, manages token budgets across the conversation history, and handles model selection based on task complexity. Light tasks get routed to faster, cheaper models. Heavy reasoning tasks get escalated. This isn't just a cost optimization — it's an architectural decision that keeps the system responsive without burning through inference budget on every low-stakes query.

The Memory System stores state in plain markdown files on disk. Session logs, user preferences, semantic memories, task context — all of it sits in readable files rather than a database. The compaction mechanism borrows from write-ahead logging in databases and virtual memory paging in operating systems: as the context grows, older entries get compressed and archived while recent context stays active. It's a genuinely smart solution to the problem of maintaining agent continuity across long-running deployments.

The decision to use markdown files instead of a database is worth dwelling on. It keeps the memory system human-readable and auditable — you can open a file and see exactly what the agent knows, modify entries manually, or delete context you don't want retained. A traditional database would be more performant at scale, but the transparency trade-off is a reasonable one for a local agent where trust and inspectability matter more than query speed. This pattern also means memory backups are trivially simple: copy the files. Recovery is equally simple: restore the files. No database migrations, no schema versioning headaches.

Skills and Execution is where the agent actually does things. Executes commands, runs scripts, controls the browser, calls external APIs. Skills are defined in markdown files — readable, auditable, modifiable. The Claw Hub marketplace lists over 10,000 community-contributed skills covering everything from email triage to code review automation.

Session isolation is handled through Docker containers. Each communication channel gets its own container, preventing context from leaking across conversations and mimicking the process isolation model of operating systems. If one channel session gets compromised, it doesn't automatically compromise the others.

These are mature design patterns applied thoughtfully to an AI agent context. The memory system in particular is worth studying even if you never deploy OpenClaw — it's a practical solution to a problem that every long-running AI agent needs to solve: how do you maintain continuity without unbounded context growth?

But the architecture also reveals exactly where the attack surface sits. And the attack surface is large.

The January Vulnerability: How a Web Page Could Own Your Agent

In January, a critical security flaw was disclosed that illustrated the risk perfectly.

The Gateway server — the hub that everything routes through — did not validate websocket origin headers. This sounds technical and abstract. The practical consequence was this: any malicious web page could include hidden JavaScript that opened a websocket connection to your local Gateway and hijacked your authentication tokens.

No password required. No additional authentication. Visit the wrong page and an attacker gains full remote control over your OpenClaw instance.

The attack doesn't require the victim to do anything other than browse a website while their OpenClaw instance is running. The malicious JavaScript runs silently in the browser, the missing validation lets the connection through, and the attacker now has the ability to issue commands to your agent — commands that execute with your permissions across every integrated platform.

An agent connected to your email, your Slack, your WhatsApp, your file system, acting on commands from an attacker. The blast radius is significant.

This vulnerability has been patched. But its existence reveals something important about the gap between architectural ambition and security implementation. The session isolation between containers was thoughtfully designed. The single point of failure at the gateway — the most exposed component in the entire system — was not.

Additional vulnerabilities disclosed since then include server-side request forgery, path traversal attacks, and authentication bypasses. These aren't obscure edge cases — they're the fundamental security categories that any network-accessible service needs to address before deployment.

The patch velocity has improved. The project is actively maintained. But the history matters when you're evaluating whether to deploy something that has access to your communications and files.

The Plugin Marketplace: 20% of 10,000 Skills Were Malicious

This is the finding that genuinely alarmed me.

Security audits of Claw Hub — the community plugin marketplace — identified approximately 800 malicious plugins out of 10,000+ available. That's a roughly 20% malware rate, concentrated primarily around a coordinated campaign with a specific targeting strategy.

The malicious plugins weren't random garbage or obvious fakes. They were functional, useful-looking skills that also extracted three specific files:

openclaw.json— the gateway authentication tokensdevice.json— cryptographic keys used for secure pairingsoulm— the agent's personality and behavior definitions

Why those three? Because with openclaw.json and device.json, an attacker has authenticated access to your agent instance. With soulm, they understand how your agent behaves and can craft instructions that align with its existing behavior patterns rather than triggering unexpected responses.

The sophistication of the targeting — knowing exactly which files contain the credentials and configuration that matter — points to actors who studied the system architecture specifically to extract maximum value from a compromise.

The 10,000-skill scale makes manual vetting impossible at the user level. Browsing Claw Hub and downloading skills that seem useful is exactly the behavior the campaign was designed to exploit. Most users don't read skill source code before installing. Most users trust that a marketplace with thousands of contributions has some form of quality control.

The comparison to npm is worth making explicitly. The npm ecosystem has had repeated supply chain attacks — malicious packages that look legitimate, get installed by developers who trust the registry, and exfiltrate credentials or install backdoors. The AI skill marketplace problem is structurally identical but with a meaningfully higher blast radius: a malicious npm package typically has access to your development environment. A malicious OpenClaw skill has access to your communications, your calendar, your files, and the ability to act as you across every platform the agent is connected to.

The software security community spent years developing practices around npm — audit tools, lock files, dependency pinning, registry scanning. The AI agent skill marketplace problem is at roughly the same maturity level npm security was at in 2016. The attacks are ahead of the defenses, and the consequences of a successful attack are more severe than most developers currently appreciate.

OpenClaw has since introduced a built-in security scanner — OpenClaw Doctor — that detects risky policies, misconfigurations, and missing authentication in installed skills. This is a meaningful improvement. But the 20% baseline malware rate across the historical catalog means any skill installed before recent security improvements should be audited retroactively, not assumed safe.

The lesson for the broader AI agent ecosystem — not just OpenClaw — is that plugin marketplaces for autonomous agents require a fundamentally different security posture than plugin marketplaces for traditional software. A malicious VS Code extension has access to your editor. A malicious OpenClaw skill has access to everything the agent is connected to, running with your identity.

Running OpenClaw Without Getting Burned: The Actual Setup

If you're going to experiment with this — and it's worth experimenting with, the architecture is genuinely interesting — here's how to do it without exposing yourself to the obvious failure modes.

Never run it on your personal machine. This is the foundation. Your personal laptop has credentials, keys, sensitive files, and direct access to your actual communication accounts. Running an agent with a documented history of credential-targeting malware on that machine is not a reasonable risk. Use a dedicated VPS or an isolated machine.

Two-layer container isolation is the minimum. One container for the gateway, separate sandbox containers for skill execution. The skill execution containers should have no network access by default (skills that legitimately need network access should require explicit permission), read-only file systems where possible, and memory limits that prevent resource exhaustion attacks. The gateway container and the skill execution containers should not share a network namespace.

Use Podman instead of Docker. Podman runs rootless — there's no root daemon process that owns the container runtime. If a container escape occurs (a documented attack class against containerized applications), the blast radius is limited to the user-level permissions of the process that was running Podman, not root. For security-sensitive deployments, rootless container execution is a meaningful defense in depth.

Bind the gateway to localhost only. The gateway runs on port 18789 by default. That port should never be directly exposed to the internet. If you need remote access to your agent, put a reverse proxy in front of it with TLS termination and authentication — not basic auth, something with proper credential management. Many of the 30,000 exposed instances are exposed because the gateway was bound to 0.0.0.0 rather than 127.0.0.1 — the simplest possible misconfiguration.

Read skill source code before installing. Every skill is a markdown file with embedded code. They're human-readable. Before installing any skill from Claw Hub, read it — specifically look for external network calls, file access patterns, and any logic that reads from the three sensitive files I described earlier. Use OpenClaw Doctor as a first pass, but don't treat a clean scan as a guarantee. The scanner catches known-bad patterns; a sophisticated attacker will eventually probe for patterns the scanner misses.

Keep the skill surface area minimal. The more skills you install, the larger the attack surface. Start with the core skills you actually need, verify them carefully, and resist the pull of the marketplace's 10,000-option catalog. You don't need most of it.

Rotate credentials after any unvetted skill installation. If you installed skills before the marketplace audit was completed and you haven't rotated your openclaw.json tokens and device.json keys since, do that now. Assume any skill installed from Claw Hub before the recent security improvements was potentially malicious. The cost of rotating credentials is low. The cost of running on compromised credentials indefinitely is not.

Audit the agent's action logs regularly. One of the advantages of the markdown-based memory system is that every session's actions are logged in readable files. Set aside time each week to review what the agent actually did — what commands it ran, what files it accessed, what messages it sent or drafted. Anomalies in those logs are the earliest indicator that something is behaving outside your intended parameters, whether due to a compromised skill or a misunderstood instruction.

The Honest Assessment: Who Should Actually Be Running This

OpenClaw is the right tool for a narrow set of use cases right now.

Developers who want to study autonomous agent architecture in a controlled environment — specifically the memory system and session isolation design — will get genuine value from running a hardened local instance. The system design is worth understanding regardless of whether you ever deploy it in production.

Security researchers have obvious reasons to be interested. The attack surface is rich and the disclosures are well-documented. Understanding how these systems fail is directly applicable to any project involving autonomous agents.

Founders and builders evaluating whether autonomous AI agents belong in their product or infrastructure should run a sandboxed instance and stress-test it against the threat model that matches their use case. Better to discover the failure modes in a controlled experiment than in production.

Who shouldn't be running OpenClaw right now: anyone who wants to connect it to production systems, real communication accounts, or files that matter, and who isn't willing to invest in the containerization and security review the deployment requires. The gap between "install and connect to my Gmail" and "install with proper isolation and vetted skills" is significant, and most people installing it are not bridging that gap.

Meta's internal ban isn't arbitrary caution. It reflects a security team looking at the historical vulnerability record and the plugin marketplace malware rate and concluding that the risk isn't justified for organizational use. That's a reasonable conclusion given the current state of the project.

None of this means OpenClaw is a bad project or that autonomous agents are inherently too risky to use. It means this specific project, at this specific point in its development, requires security-conscious deployment that most casual installers aren't providing.

What OpenClaw Tells Us About Where AI Agents Are Going

The interesting question isn't whether OpenClaw's current security posture is problematic — it clearly is. The interesting question is what the existence of OpenClaw, its 200,000 stars, and its architectural sophistication tells us about where the agent ecosystem is heading.

Autonomous AI agents with persistent state, proactive triggering, and deep integration into communication and file systems are coming regardless of whether any specific project gets the security right on the first attempt. The demand is clear. The architecture is proven. The missing piece is the security framework that makes these systems trustworthy enough for broad deployment.

What OpenClaw's plugin marketplace problem illustrates is that the current trust model — community-contributed skills, user discretion — doesn't scale to the threat model of an agent that has access to everything. The security architecture needs to be designed around the assumption that skills will be malicious, not the assumption that they won't. Permission scoping, sandboxed execution, cryptographic signing of skill packages, and mandatory code review before marketplace listing are the direction.

The websocket origin vulnerability illustrates a different class of problem: the assumption that a locally-running service is inherently protected because it's "local." Local services that authenticate via token and accept connections without origin validation are not local-only. They're accessible to any code running in the browser of the machine they're running on. For AI agents specifically — which are often running on the same machine as a browser — this is a critical design assumption to get right.

The teams that build autonomous AI agents with production-quality security will have genuine advantages over those that build first and patch later. OpenClaw's architecture is worth studying. Its deployment practice, as currently widespread, is worth avoiding.

That's the real lesson from 30,000 exposed instances and 800 malicious plugins.

🤝 Let's Work Together

Looking to build AI systems, automate workflows, or scale your tech infrastructure? I'd love to help.

- 🔗 Fiverr (custom builds & integrations): fiverr.com/s/EgxYmWD

- 🌐 Portfolio: mejba.me

- 🏢 Ramlit Limited (enterprise solutions): ramlit.com

- 🎨 ColorPark (design & branding): colorpark.io

- 🛡 xCyberSecurity (security services): xcybersecurity.io