Opus 4.6 Now Has 1M Token Context: My Real Tests

I was 47 messages deep into a Claude Code session last Tuesday — refactoring a sprawling Laravel monolith into domain services — when the model started hallucinating function names that didn't exist. Not random names. Names that almost matched real functions from files I'd fed it twenty minutes earlier. The context had rotted. The model was drowning in its own memory, confusing file A with file B, inventing plausible-sounding methods that lived nowhere in my codebase.

I cleared the context, re-fed the critical files, and started over. Again. For the third time that day.

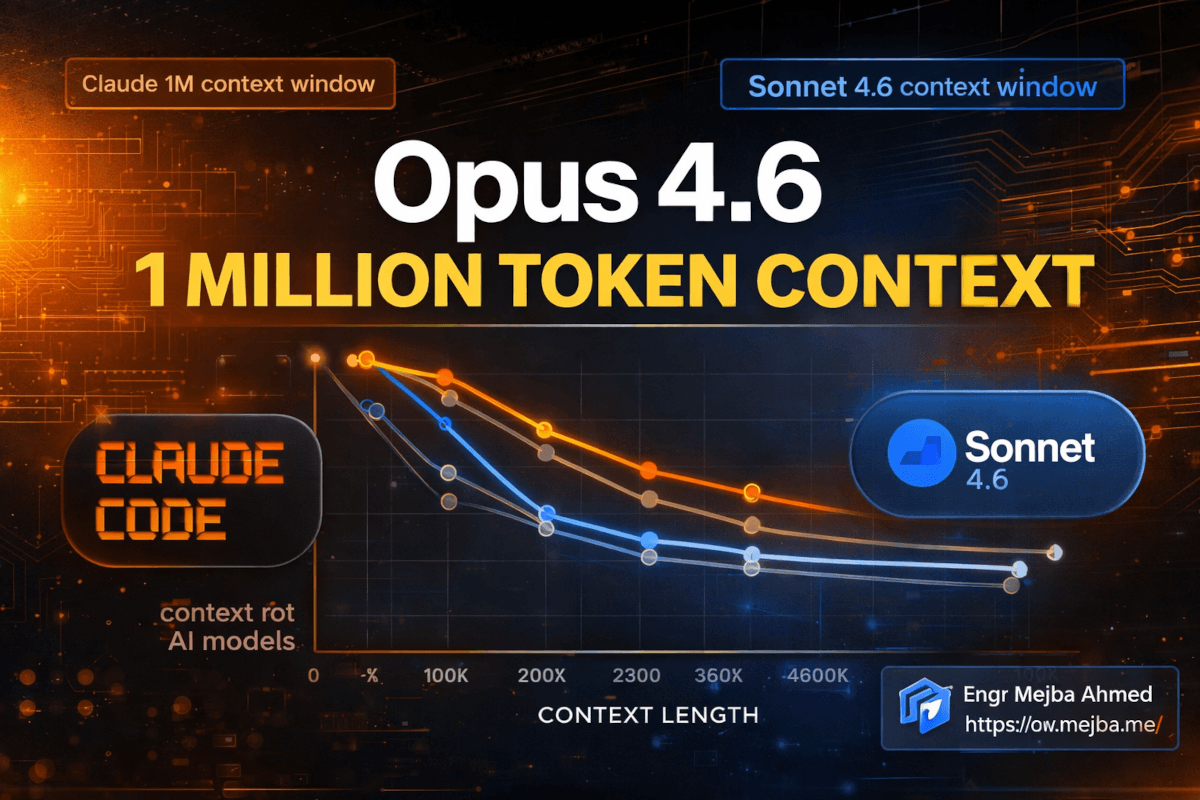

If you've used any large language model for serious coding work, you know this exact pain. You hit a wall somewhere around 100K-120K tokens where the model stops being a collaborator and starts being a liability. Every Claude Code power user I know has developed the same muscle memory: watch the token count, clear early, re-prime often. It works. But it's exhausting. And it means you can never truly hand the model a massive codebase and say "understand all of this."

That changed on March 13th, 2026. Anthropic quietly rolled out 1 million token context windows for both Opus 4.6 and Sonnet 4.6 — a 5x jump from the previous 200K ceiling. And after spending three days pushing this to its limits, I can tell you: this isn't an incremental upgrade. This is the single biggest practical improvement to Claude since I started using it daily.

But the raw number isn't even the interesting part. What's interesting is how little the model degrades across that massive context. And that's where the story gets worth telling.

What Is Context Rot — And Why 1M Tokens Alone Doesn't Fix It

Here's the dirty secret most AI companies don't want to talk about openly: a bigger context window means nothing if the model can't actually use the information sitting at the far edges of it.

This problem has a name. Context rot. It's the phenomenon where model performance degrades — sometimes catastrophically — as input context grows beyond a certain threshold. Think of it like reading a 500-page novel in one sitting versus a 50-page novella. By page 400, your recall of a specific detail from page 12 is... fuzzy at best.

Previous models hit this hard. Feed Opus 4.5 more than about 100K tokens, and its ability to recall specific details scattered throughout the input dropped off a cliff. The model technically accepted 128K tokens. But accepting tokens and actually reasoning over them are very different things.

Google made the same bet with Gemini — massive context windows that sounded great in marketing materials but performed inconsistently when you actually needed the model to find a specific configuration buried deep in a large input. I tested Gemini 3.1 Pro on exactly this kind of task. The results were not confidence-inspiring.

So when Anthropic announced 1M tokens, my first question wasn't "how big?" It was "how much does it forget?"

The answer surprised me. And it's backed by a specific benchmark that I think every developer should understand.

The Eight Needle Test: Why This Benchmark Matters

Anthropic uses a test called the "eight needle" evaluation. The concept is straightforward but brutal: scatter eight specific, distinct pieces of information across a massive input context. Then ask the model to recall all eight.

It's like hiding eight specific sentences inside a 3,000-page document and asking someone to find every single one without missing any. Not approximately. Not "I found six out of eight." All eight, with accurate details.

This test matters because it measures something most benchmarks ignore — the ability to maintain granular recall across the entire context window, not just the beginning and end. Models that score well on eight needle are models you can actually trust with large codebases, long document analysis, and multi-file refactoring sessions.

Here are the numbers. Look at these carefully:

| Model | Max Context Window | Eight Needle Score | Key Takeaway |

|---|---|---|---|

| Opus 4.5 | ~128,000 | 27.1 | Performance cliff beyond ~100K |

| Gemini 3.1 Pro | ~200,000 | 26.0 | Similar degradation pattern |

| Sonnet 4.5 | ~200,000 | 18.5 | Worst recall among peers |

| Opus 4.6 | 1,000,000 | 78.3 | 5x context, 3x effectiveness |

| GPT 5.4 | Not specified | ~78.0 | Competitive with Opus 4.6 |

Read that again. Opus 4.5 scored 27.1 at roughly 128K tokens. Opus 4.6 scored 78.3 at one million tokens. That's not just a larger window — it's nearly triple the recall effectiveness at almost eight times the context length. The model isn't just accepting more tokens. It's actually reasoning over them in a way the previous generation couldn't touch.

And yes — GPT 5.4 hits roughly the same eight needle score. Credit where it's due. But GPT 5.4 hasn't published a clear maximum context window, and in my testing, its practical performance on very long coding sessions doesn't quite match the synthetic benchmark numbers. More on that when I get to the real-world results.

The Gemini 3.1 Pro numbers are worth noting too. Google's model scored 26.0 — essentially tied with the previous generation Opus 4.5, despite Google marketing Gemini's context window as a key differentiator. Big windows, mediocre recall. That's the pattern Anthropic just broke.

Here's the practical translation: across 1 million tokens, Opus 4.6 shows only about a 14% drop in effectiveness compared to its performance at 256 tokens. Think about that. You can feed it nearly a thousand pages of code, documentation, and conversation history, and it retains 86% of its short-context capability. That's not perfect. But it's usable in a way that no previous model has been.

The 2% Rule: A Practical Heuristic for Token Management

After running my own tests alongside the published benchmarks, I've landed on a rough heuristic that's been accurate enough to plan around: expect approximately a 2% drop in effectiveness for every additional 100K tokens of context.

At 100K tokens: ~2% degradation. Barely noticeable. At 200K tokens: ~4% degradation. Still extremely solid. At 500K tokens: ~10% degradation. You'll occasionally notice slightly less precise recall. At 1M tokens: ~14% degradation. Working harder, but still functional.

This is a guideline, not a law. The actual degradation depends on what's in your context — homogeneous code in one language degrades differently than a mix of documentation, code, configs, and conversation history. But as a planning tool, the 2% rule has held up well in my three days of testing.

What this means practically: the old advice of "clear your context at 100K-120K tokens" is no longer a hard rule. You can push well beyond that now. Should you push all the way to 1M every time? Probably not — and I'll explain why in the implementation section. But the operational ceiling has moved dramatically upward.

The previous best practice was rooted in real pain. Beyond 120K tokens on Opus 4.5, you'd start seeing the model confuse similar variable names, merge details from different files, or "forget" constraints you'd set early in the conversation. Those problems don't vanish at 1M tokens — but they've been pushed so far out that most real-world sessions will never hit them.

That shift changes how I structure my entire workflow. And it probably should change yours too.

How I Actually Use This in Claude Code Daily

Theory is nice. What does 1M tokens feel like when you're shipping code?

I spend anywhere from four to ten hours a day inside Claude Code. It's my primary development environment — not an assistant I check in with occasionally. I use it for everything from writing new features to debugging production issues to refactoring entire module structures. Before the 1M context update, my workflow looked like this:

- Start a session with system prompt and key files (~15K tokens)

- Work through tasks, feeding files and receiving outputs

- Watch the token counter nervously

- Around 100K-120K tokens, notice the first signs of drift — repeated suggestions, slightly off variable names, forgotten constraints

- Clear context, re-feed critical files, lose the conversational thread

- Repeat steps 2-5 two or three times per major task

Now? I start a session and I just... work. For hours. Without the constant mental overhead of context management. The cognitive load reduction is hard to overstate. It's like the difference between driving with a fuel gauge that's always near empty versus having a full tank. You stop worrying about the resource and start focusing on the road.

Here's a specific example from this week. I was migrating a multi-tenant SaaS application from a shared-database architecture to a database-per-tenant model. This involved touching 23 files: models, migrations, middleware, config files, test suites, and deployment scripts. On the old context window, I'd have needed at least three separate sessions, each time re-establishing which files had been modified and what the overall migration strategy was.

With the 1M context window, I loaded all 23 files upfront (~85K tokens), plus the existing migration documentation (~12K tokens), plus my architectural notes (~8K tokens). That's roughly 105K tokens just for the initial context — already past the old "danger zone." Then I worked through the migration file by file, with the model maintaining perfect awareness of every change we'd made across the entire session. The session ran to approximately 340K tokens before I finished.

Not once did I need to clear. Not once did the model confuse a tenant-scoped query with a global one. Not once did I have to say "remember, we already changed the middleware in step 4."

That session would have taken me an entire day with the old context limits, between the re-priming and the re-explaining and the fixing of mistakes caused by context loss. It took four hours.

A Note About Claude Code's Autocompact Buffer

One thing that tripped me up initially: Claude Code still uses an autocompact buffer of 33K tokens. This is the rolling window of recent conversation that the model keeps in active working memory, separate from the broader context.

The 1M context window doesn't change this buffer size. What it changes is the total context the model can reference — your files, your system prompt, the full conversation history, and the autocompact buffer combined. The buffer is still 33K tokens of "hot" memory, but now the "warm" memory extends to 1M tokens instead of 200K.

In practice, this means the model is still strongest on your most recent exchanges (the buffer) but can now reach back much further into the conversation history and loaded files without losing the thread. The combination works well. I haven't felt the need for a larger buffer — the 33K active window handles the immediate back-and-forth, and the expanded context handles everything else.

What About the Cost? Anthropic Made a Smart Move

Here's something that almost got buried in the announcement but matters enormously for anyone running serious Claude Code sessions: Anthropic removed the cost multiplier for context beyond 200K tokens.

Previously, using context beyond the standard window came with a pricing penalty. The exact multiplier varied, but it meant that a 400K token session cost meaningfully more per token than a 100K token session. This created a perverse incentive — you were financially punished for using the model's full capability.

Now? Flat pricing. Whether your session uses 9K tokens or 900K tokens, the per-token cost is the same. You're paying for what you consume, not paying a premium for consuming a lot of it.

This is available on Claude Code's Max plan, Teams, and Enterprise tiers. If you're on any of those plans — and if you're reading this blog, you probably should be — the 1M context window is already active. No feature flag. No waitlist. It's just there.

The pricing change matters because it removes the last practical barrier to actually using the expanded context. Before, I'd sometimes clear context early not because the model was degrading, but because I was watching my API bill climb. That calculation is gone. I can now make context management decisions based purely on quality, not cost.

If you'd rather have someone set up and optimize Claude Code workflows for your team's specific needs, I take on exactly those kinds of engagements. You can see what I've built at fiverr.com/s/EgxYmWD.

My Recommended Context Strategy for March 2026

Alright, so you have 1M tokens available. Should you use all of them, all the time? No. Here's the strategy I've settled on after three days of deliberate testing.

Step 1: Front-Load Your Critical Context

At the start of every session, load the files and documentation that matter most. Don't drip-feed them — give the model the full picture upfront. This takes advantage of the model's strongest recall zone (the beginning of context) for your most important information.

For a typical coding session, my initial load looks like:

- System prompt and project conventions (~5K tokens)

- Architecture documentation (~8-15K tokens)

- All files I expect to modify (~40-100K tokens)

- Related test files (~20-40K tokens)

- Recent git diff for context on what's changed (~5-10K tokens)

That's anywhere from 80K to 170K tokens before the first message. On the old model, this would have left me almost no room to work. Now it's less than 20% of my available context.

Step 2: Set Your Personal Degradation Threshold

Based on the 2% per 100K heuristic, decide how much degradation you're comfortable with:

-

Conservative (minimize degradation): Clear or compact around 200K tokens. You'll experience ~4% degradation — essentially imperceptible in daily work. This is what I'd recommend for production-critical refactoring where precision is non-negotiable.

-

Balanced (my default): Work up to 400K-500K tokens before considering a clear. At ~10% degradation, the model is still highly capable, and you avoid the productivity loss of re-priming. This is where I operate for most coding sessions.

-

Extended (maximum continuity): Push toward 700K-1M tokens for sessions where maintaining the full conversational thread matters more than peak recall — like exploratory architecture discussions or long debugging sessions where every prior attempt is relevant context.

Step 3: Watch for Specific Degradation Signals

Even with the improved context handling, degradation does eventually show up. Here's what to watch for:

- Variable name confusion: The model starts mixing up similarly-named variables from different files. This is usually the first sign.

- Constraint drift: Instructions from early in the session start getting partially ignored. You notice the model not following a formatting rule or skipping a step you'd specified.

- Confident fabrication: The model states something about your code with confidence, but it's subtly wrong — a function signature with the wrong parameter order, or a method that exists on a different class than stated.

- Repetitive suggestions: You ask for a new approach and get back something very similar to what it already tried. The model is losing track of what's been attempted.

When you spot two or more of these in close succession, that's your signal. Don't wait for it to get worse. Clear context, re-prime with your critical files, and continue.

Step 4: Use Intentional Bookmarks

This is a technique I've developed specifically for long sessions. Every 150K-200K tokens, I drop a "bookmark" message:

Quick checkpoint: we've completed [X, Y, Z]. Current state:

- File A: modified (added tenant scoping)

- File B: not yet touched

- File C: needs migration update

Next: work on File B's query layer.

This serves two purposes. First, it forces me to organize my own thinking about where the session stands. Second, it gives the model a clean, recent summary of the project state that falls within its autocompact buffer. Even if recall of early-session details has degraded slightly, the bookmark provides a fresh anchor point.

I've found this single technique is worth more than any amount of token counting. A well-placed bookmark at 300K tokens keeps the model sharper than no bookmark at 200K tokens.

Why I Think This Matters More Than Loops or Beat

I want to put this in perspective. Over the past six months, Anthropic has shipped a lot of features for Claude Code. Loops (the ability for the model to iteratively run and test code). Beat (the ability to handle background tasks). Extended thinking improvements. Tool use refinements. All good stuff. All things I use daily.

But the 1M context window is different in kind, not just degree. Here's why.

Every other feature improves what the model can do within a single interaction. Loops make it better at iterating. Beat makes it better at multitasking. Thinking makes it better at reasoning. These are all about capability at a point in time.

The context window expansion improves what the model can know during a session. It's about memory, not skill. And memory turns out to be the bottleneck that was quietly limiting everything else.

A model with perfect coding ability but amnesia after 100K tokens is a model that can only work on small problems — or work on big problems in small, disconnected chunks. A model with the same coding ability and 1M tokens of reliable memory can tackle projects that were previously out of reach for AI-assisted development.

I'm talking about full-application refactors. Multi-service architecture changes. Codebase-wide pattern migrations. Security audits that need to cross-reference every authentication endpoint with every authorization check. These are the tasks where human developers spend weeks and still miss things. They're also the tasks where an AI with sufficient context could find patterns and inconsistencies that no human would catch.

We're not fully there yet. The 14% degradation at 1M tokens means you still need to be thoughtful about how you use the context. But we're close enough that I've started tackling tasks with Claude Code that I would have considered impossible three months ago.

The competitive landscape makes this even more interesting. GPT 5.4 is neck-and-neck on the eight needle benchmark at ~78 versus Opus 4.6's 78.3 — statistically insignificant difference. But Anthropic's flat pricing model and the Claude Code integration give it a practical edge for developers who live in the terminal. I've used both extensively. On raw recall, they're peers. On workflow integration for coding tasks, Claude Code's implementation is smoother.

Gemini 3.1 Pro, despite Google's massive investment in long-context research, is a full generation behind on recall quality. A score of 26.0 on the eight needle test — nearly identical to the previous generation Opus 4.5 — suggests that Google solved the context window size problem without solving the context quality problem. Big window, leaky memory. That's not a combination I'd trust with a 20-file refactoring session.

The Honest Limitations — What This Doesn't Solve

I'd be lying if I told you the 1M context window is all upside. There are real trade-offs and limitations you should know about before changing your workflow.

Latency increases with context size. More tokens means more to process on each turn. I've noticed response times roughly doubling between 100K and 500K tokens of context. At 800K+, there's a perceptible delay before the model starts generating. It's not terrible — we're talking seconds, not minutes — but if you're used to near-instant responses on short contexts, the lag is noticeable.

Not all degradation is equal. The 14% average degradation masks significant variance depending on what you're asking the model to recall. Specific numeric values (like port numbers or version strings) buried deep in context degrade faster than structural patterns (like "this module handles authentication"). If your work depends on precise detail recall from early context, the effective degradation for your use case might be higher than 14%.

The autocompact buffer is still 33K. This means the model's active working memory hasn't changed. If you're doing rapid back-and-forth on a specific problem, the 33K buffer is your real constraint, not the 1M context window. The expanded context helps with "cold" recall — reaching back to something from earlier in the session — but doesn't make the model better at juggling multiple active threads in the immediate conversation.

You can still outrun it. I managed to get the model genuinely confused during a session where I was simultaneously modifying interdependent files across three microservices. At around 600K tokens, it started suggesting changes to Service A that conflicted with changes we'd already made to Service B twenty minutes prior. The bookmark technique helped, but it didn't eliminate the issue entirely.

These aren't dealbreakers. They're the kind of limitations you learn to work around once you understand them. But I'd rather you hear them from me than discover them during a critical deploy.

What This Means for the Next Six Months

I've been building with AI coding tools since GPT-3.5 made them viable. Through that entire arc, one pattern has been consistent: the biggest leaps forward always come from expanding what the model can hold in context, not from making it marginally smarter at any single task.

The jump from 4K to 32K tokens made AI-assisted coding possible. The jump from 32K to 128K made it practical for real projects. The jump from 200K to 1M makes it viable for entire codebases.

We're approaching a threshold where a model can hold your entire application — every file, every test, every config — in a single context window. For a typical mid-size application (200-500 files), we're already there. For large enterprise codebases, we're maybe one more generation away.

When that happens, the workflow shift is fundamental. You stop thinking about "which files does the AI need to see?" and start thinking about "what should I ask the AI to do across my entire codebase?" That's a qualitatively different kind of development assistance. It's the difference between an AI that helps you edit a file and an AI that understands your system.

I think we'll look back at March 2026 as the month that transition started in earnest. Not because 1M tokens is the final number — it's not. But because it's the first time the context was large enough and the recall was reliable enough to make whole-codebase AI assistance actually work.

For the first time in my experience, the context window isn't the bottleneck. And that means the bottleneck is now... us. Our ability to ask the right questions, structure the right prompts, and design workflows that take advantage of what's suddenly possible.

I'll take that trade-off. Every time.

FAQ

Frequently Asked Questions

Everything you need to know about this topic

No setup required. The 1M context window is automatically available on Claude Code's Max plan, Teams, and Enterprise tiers as of March 2026. If you're on one of those plans, it's already active.

For precision-critical work like production refactoring, clear around 200K tokens. For exploratory sessions or long debugging, push to 400K-500K comfortably. The 2% degradation per 100K tokens heuristic helps you decide. For a deeper breakdown, see My Recommended Context Strategy above.

No. Anthropic removed the cost multiplier for context beyond 200K tokens. Pricing is flat whether your session uses 9K or 900K tokens. See What About the Cost above for details.

Both models score approximately 78 on the eight needle benchmark — statistically tied on raw recall. Opus 4.6 has a slight edge in Claude Code workflow integration and flat pricing. See the benchmark comparison table in The Eight Needle Test section.

Claude Code's autocompact buffer remains at 33K tokens — this is the "hot" working memory for immediate back-and-forth. The 1M expansion increases total referenceable context, not the active buffer. See A Note About Claude Code's Autocompact Buffer for how the two interact.

Let's Work Together

Looking to build AI systems, automate workflows, or scale your tech infrastructure? I'd love to help.

- Fiverr (custom builds & integrations): fiverr.com/s/EgxYmWD

- Portfolio: mejba.me

- Ramlit Limited (enterprise solutions): ramlit.com

- ColorPark (design & branding): colorpark.io

- xCyberSecurity (security services): xcybersecurity.io