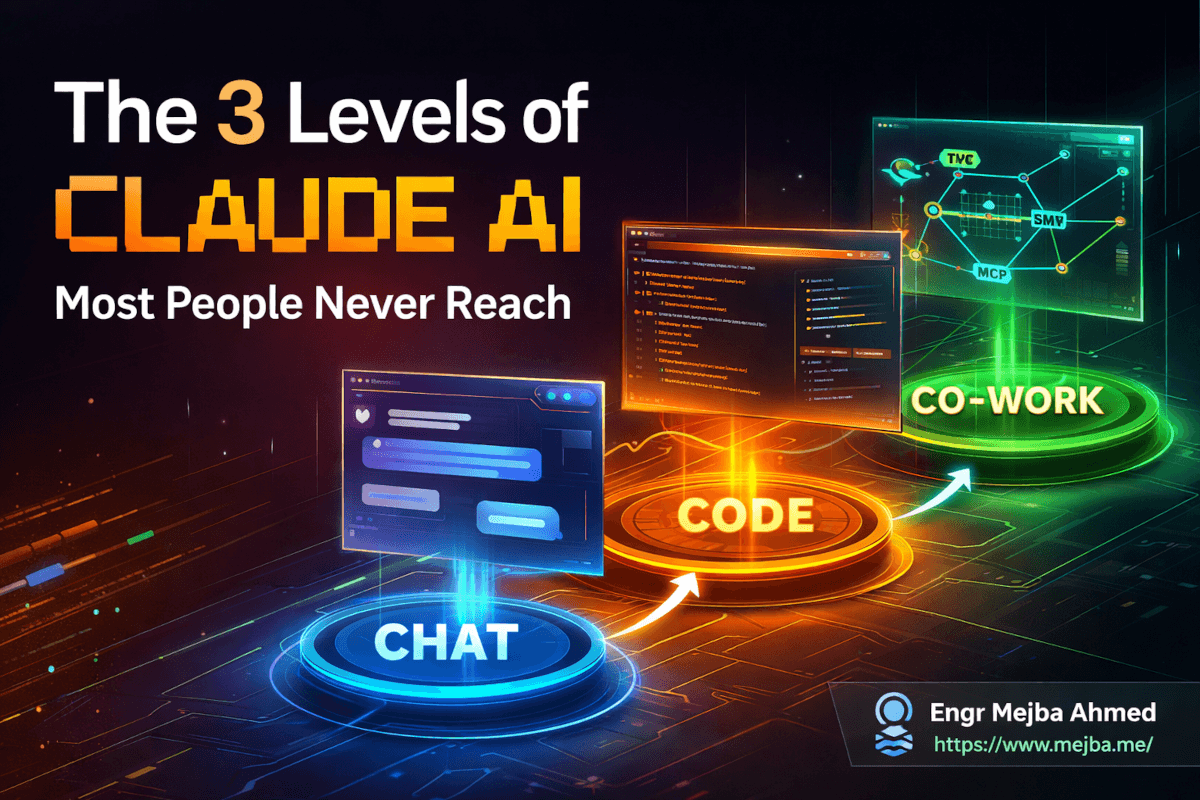

The 3 Levels of Claude AI Most People Never Reach

Six months ago, I watched a developer friend spend forty-five minutes manually renaming and sorting 300 files into folders based on their content type. He had Claude open in a browser tab the entire time. Using it to ask questions. Copying and pasting answers back into his terminal one command at a time.

I walked over, opened Claude Code on his machine, connected an MCP server to his file system, and wrote a three-line instruction. The whole job finished in under two minutes. He stared at the screen like I'd performed a magic trick.

It wasn't magic. He was using Claude at Level 1. I was using it at Level 3. Same AI. Same underlying intelligence. Completely different universe of capability.

That gap -- between what most people do with Claude and what Claude can actually do -- is the single biggest waste of potential I see in the AI space right now. And I'm not talking about obscure, developer-only tricks. I'm talking about a clear progression that anyone can follow, from casual chat all the way to fully automated workflows that run while you sleep.

I've spent the past year working across every layer of Anthropic's ecosystem: building with the API, shipping projects through Claude Code, wiring up MCP servers for clients, and stress-testing every new feature the day it drops. What I've learned is that Claude isn't one tool. It's three tools stacked on top of each other, and most people only ever unwrap the first one.

Here's the framework I wish someone had given me when I started. We'll walk through all three levels, and by the end, you'll know exactly which level you're operating at -- and how to reach the next one.

What Changed About Claude's Model Lineup in 2026

Before we talk about how to use Claude, you need to understand what you're actually talking to. Because picking the wrong model for the wrong task is like using a sledgehammer to hang a picture frame. It'll work, technically. But you're wasting energy and probably damaging something in the process.

As of early 2026, Anthropic's lineup has three tiers, and each one exists for a specific reason.

Opus 4.6 sits at the top. This is the flagship -- the model I reach for when I need deep reasoning, multi-step problem solving, or anything where getting the nuance wrong has real consequences. Code architecture decisions. Security audit analysis. Long-form content that needs to hold together across thousands of words. Opus doesn't just process your prompt. It thinks about it. The tradeoff is speed and cost. You'll feel the latency, and if you're on the API, you'll feel it in your bill too.

Sonnet 4.6 is the workhorse. Faster than Opus, cheaper than Opus, and honestly good enough for 80% of what most people throw at it. I use Sonnet for day-to-day coding assistance, drafting, data analysis, and any task where I need quality but don't need the absolute ceiling of reasoning capability. If Opus is the senior architect, Sonnet is the strong mid-level engineer who ships clean code all day without burning out.

Haiku 4.5 is the lightweight option. Fast, cheap, surprisingly capable for its size. I use Haiku for classification tasks, quick summarization, and anywhere I'm making high-volume API calls where per-token cost matters. Don't sleep on Haiku -- for the right use cases, it's the smartest choice precisely because it doesn't overthink things.

The mistake I see constantly: people default to the most powerful model for everything. That's like driving a Ferrari to pick up groceries. Match the model to the task. Save Opus for the moments that actually demand it.

Now that you know what's under the hood, let's talk about the three levels of actually using these models -- because the model you choose matters far less than how you interact with it.

Level 1: Claude Chat -- Where Everyone Starts (and Most People Stop)

Level 1 is the browser. claude.ai. You type something, Claude responds, and you go back and forth. Simple. Familiar. And if this is all you're doing, you're accessing maybe 20% of what you're paying for.

I'm not saying Chat is basic. I'm saying most people use it in a basic way. The gap between a beginner's chat session and an expert's chat session is enormous -- and it has nothing to do with the model. It's all prompting technique and feature awareness.

The Prompt Engineering Framework That Actually Works

Most prompting advice online is either too vague ("be specific!") or too academic to be useful in practice. Here's the framework I use every single day, and it takes about ten seconds of extra thought per prompt.

Context + Task + Format. Three pieces. Every time.

Context is what Claude needs to know before it can help you. Your role, the situation, constraints, prior decisions. Don't assume Claude knows anything about your specific scenario -- it doesn't.

Task is the specific thing you want done. Not "help me with my code" but "refactor this authentication middleware to use a shared token validation function."

Format is how you want the output. A bullet list? A code block with comments? A table comparing options? Specify it, or Claude will guess -- and it might guess wrong.

Here's the difference in practice. Bad prompt: "Explain Docker networking." Decent prompt: "I'm a backend developer migrating a monolithic Node.js app to microservices using Docker Compose. Explain how Docker bridge networks handle inter-container communication, and show me a docker-compose.yml example where three services communicate on a custom network. Use comments in the YAML to explain each networking decision."

The second prompt gets you a response that's immediately useful. The first gets you a Wikipedia article. Same model, wildly different output -- and the only difference is thirty seconds of thinking about what you actually need.

Connectors Turn Claude Into a Hub

Here's a feature that flew under the radar for a lot of people: Connectors. Claude can now plug directly into services like Gmail, Google Drive, and other apps -- pulling real data into your conversations without copy-pasting.

I connected my Gmail, and now I can say things like "summarize the last five emails from my AWS billing alerts" or "draft a reply to the latest message from [client name] that addresses their timeline concerns." Claude reads the actual emails, understands the context, and responds with something I can send.

This sounds small. It isn't. The friction of switching tabs, copying text, pasting it into Claude, and then copying the response back -- that friction adds up across dozens of interactions per day. Connectors cut it to zero for supported services.

Projects Give Claude a Memory

One of the most underused features at Level 1 is Projects. A Project is a persistent workspace where you can upload documents, set custom instructions, and have conversations that share a common knowledge base.

I have a Project for every active client engagement. Each one contains their tech stack documentation, coding standards, and previous conversation context. When I start a new chat inside that Project, Claude already knows the codebase conventions, the deployment pipeline, the team's preferred patterns. I don't re-explain anything.

The power move here is combining Projects with detailed system instructions. I'll write something like: "You are assisting with the Ramlit client portal rebuild. The stack is Laravel 11, Inertia.js, React 18, and PostgreSQL 16. All API responses must follow our standard envelope format. Never suggest raw SQL -- always use Eloquent." Now every conversation in that Project follows those rules automatically.

If you're using Claude without Projects, you're re-teaching it your preferences in every single conversation. That's hours of wasted context-setting per week.

Extended Thinking: When You Need Claude to Actually Reason

Standard Claude responses happen fast. That speed is great for most things. But some problems need more than pattern matching -- they need genuine multi-step reasoning. Architecture decisions. Debugging complex race conditions. Evaluating tradeoffs between approaches that all seem reasonable on the surface.

Extended Thinking mode tells Claude to slow down and work through the problem step by step before giving you an answer. I turn it on for anything where I've been stuck for more than thirty minutes myself. The quality difference is tangible -- you'll get responses that consider edge cases, acknowledge tradeoffs, and arrive at conclusions through visible reasoning chains rather than confident-sounding guesses.

The catch: it's slower and uses more tokens. Don't leave it on for everything. Turn it on when the problem is hard. Turn it off when you just need a quick function signature or a regex pattern.

Artifacts: Claude Builds Things You Can See and Touch

This one changed how I prototype. Artifacts let Claude generate interactive outputs -- working UI components, mini-applications, data visualizations, formatted documents -- right inside the chat interface. Not just code. Running, clickable, testable things.

Last week I needed to pitch a dashboard layout to a client. Instead of spending two hours mocking it up in Figma, I described the layout to Claude, and it generated a fully interactive React component as an Artifact. Clickable tabs. Responsive grid. Placeholder data that matched the client's domain. I screen-recorded it and sent the video. The client approved it in an hour.

Artifacts are also incredible for content drafting, SVG generation, and quick data tools. I've built CSV parsers, color palette generators, and interview prep flashcard apps -- all as Artifacts, all in under five minutes each.

Skills: Teach Claude Your Playbook Once

Skills are predefined instruction sets you can save and reuse. Think of them as macros for Claude's behavior. Instead of writing the same detailed prompt every time you need a code review, a security audit, or a blog post outline, you define a Skill once and trigger it whenever you need it.

I have Skills for code review (with my specific criteria for naming conventions, error handling, and test coverage), for generating API documentation in my preferred format, and for drafting technical proposals that follow my client's template.

One security note worth mentioning: be thoughtful about what you put in Skills. They execute with the same permissions as your conversation, so a poorly written Skill that includes sensitive context or overly broad instructions could leak information you didn't intend to share. Keep Skills focused and audit them periodically.

The compound effect here is real. A good library of Skills turns Claude from a generic assistant into a customized tool that knows your standards, your preferences, and your workflow. Building that library is a one-time investment that pays off in every conversation afterward.

But here's the thing -- everything I've described so far happens inside a browser tab. You're still the middleman, copying outputs and pasting them into your actual tools. That's where Level 2 breaks the ceiling wide open.

Level 2: Claude Code -- Where AI Meets Your Actual Workflow

The day I installed Claude Code was the day my relationship with AI development fundamentally shifted. Chat is a conversation. Code is a collaborator that lives inside your development environment and acts on your codebase directly.

Claude Code runs in your terminal. It reads your files. It writes code. It runs commands. It understands your project structure not because you described it, but because it's looking at it. The mental model shift is significant: you stop telling Claude about your code and start letting Claude work with your code.

Getting Set Up: More Options Than You Think

Claude Code isn't limited to one interface. You can run it as a desktop application, straight from your terminal, as a VS Code extension, or even as a Chrome extension for web-based workflows. Each entry point has its strengths.

I spend most of my time in the terminal version. It's fast, it integrates with my existing shell workflow, and I can pipe outputs between Claude Code and other CLI tools. The VS Code extension is excellent when I want Claude to see my editor state -- which files are open, where my cursor is, what I've highlighted. The Chrome extension is useful for quick tasks when I'm browsing documentation and want to ask Claude something about what I'm reading without switching windows.

Pick the one that fits how you already work. The capability is identical across all of them.

The init Command: Your Codebase's Autobiography

Run claude code init (or the equivalent /init command inside a session) at the root of any project, and Claude Code will scan your entire codebase and generate a CLAUDE.md file -- essentially a machine-readable summary of your project's architecture, conventions, dependencies, and structure.

This file becomes Claude Code's long-term memory for your project. Every future session reads it. Every suggestion respects it. And you can edit it to add project-specific rules, preferred patterns, or things Claude should never do.

I add rules like "never modify files in the /migrations directory without explicit approval" and "all new API endpoints must include OpenAPI doc comments." Claude Code follows them religiously.

If you're using Claude Code without an init file, you're making it guess about your project. Stop guessing. Run init.

Commands, Hooks, and Custom Scripts

This is where Claude Code starts feeling less like an AI assistant and more like a programmable member of your team.

Commands are slash-command shortcuts you can define for frequently used operations. I have /review for code reviews, /test for running and analyzing test suites, and /deploy-check for pre-deployment validation. Each one triggers a specific Claude Code behavior I've configured.

Hooks are event-driven automations. You can set Claude Code to automatically run certain checks or actions when specific things happen -- like linting every file before Claude commits it, or running security scans on any new dependency Claude installs. Hooks are your safety net. They ensure Claude Code operates within your guardrails even when you're not watching every step.

Custom scripts take it further. You can write shell scripts or Python scripts that Claude Code invokes as part of its workflow. I have a script that runs my full test suite and feeds the results back to Claude Code so it can automatically fix failing tests. The loop -- write code, run tests, interpret failures, fix code, re-run tests -- happens without me touching the keyboard.

If you'd rather have someone build this kind of automated development setup from scratch, I take on exactly these engagements. You can see what I've built at fiverr.com/s/EgxYmWD.

Ultra Think Mode: When Standard Reasoning Isn't Enough

Extended Thinking was Level 1's power feature. Ultra Think is its bigger, more resource-hungry sibling, and it's available in Claude Code where complex, multi-file reasoning is common.

I activate Ultra Think when I'm dealing with problems that span multiple files and require understanding the relationships between them. Refactoring a shared service that's imported by fifteen different modules. Tracing a bug that manifests in the frontend but originates three layers deep in the backend. Designing a migration strategy that needs to account for data integrity across four database tables.

Ultra Think mode doesn't just give Claude more time. It gives Claude a larger scratchpad for working through complex reasoning chains. The results are noticeably more thorough -- Claude will catch implications and edge cases that standard mode misses.

Use it sparingly. It's the Opus of thinking modes -- powerful, slower, and worth every second when the problem justifies it.

Code Auditing and Developer Productivity

Here's a workflow I've built that saves me roughly five hours per week.

Every Monday morning, I point Claude Code at the previous week's pull requests and ask it to audit them. Not just for bugs -- for pattern consistency, security implications, performance concerns, and documentation gaps. Claude Code reads the diffs, cross-references them against our project conventions (from the CLAUDE.md file), and produces a structured audit report.

Before Claude Code, I did this manually. It took most of Monday morning and I inevitably missed things because human attention drifts after the third PR. Claude Code doesn't drift. It applies the same criteria to PR number one as it does to PR number twenty.

The productivity compound is what gets me. Claude Code doesn't just save time on the task you give it. It saves time on every downstream task that would have been affected by the bugs, inconsistencies, or documentation gaps it catches early. A bug caught in code review costs ten minutes to fix. The same bug caught in production costs a day.

Level 2 is transformative for individual developers. But there's still a ceiling: you're limited to what Claude can do inside your local environment, with the files and tools it can directly access. Level 3 removes that ceiling entirely.

Level 3: Claude Co-work and MCPs -- The Automation Layer

This is where most people's eyes glaze over, and it's exactly where the biggest leverage lives. Level 3 is about connecting Claude to everything -- not just your codebase, but your entire digital infrastructure. And the technology that makes it possible is the Model Context Protocol.

What Is MCP, and Why Should You Care?

MCP -- Model Context Protocol -- is an open standard that Anthropic created to solve a fundamental problem: AI models are smart, but they're trapped inside their conversation window. They can't reach out and interact with the world unless you give them a bridge.

MCP is that bridge. It's a client-server architecture where Claude (the client) connects to MCP servers that expose capabilities from external tools and services. A Gmail MCP server lets Claude read and send emails. A file system MCP server lets Claude organize your documents. A database MCP server lets Claude query and update your data. A GitHub MCP server lets Claude manage issues, review PRs, and trigger workflows.

The architecture is clean. Claude sends structured requests to the MCP server. The server translates those requests into API calls, file operations, or whatever the external service requires. Results flow back to Claude. From your perspective, you just talk to Claude and things happen in the real world.

I know that sounds abstract, so let me make it concrete.

A Real Example: Automated File Organization

A client came to me with a nightmare folder: 2,000+ files dumped into a single directory over three years. PDFs, images, spreadsheets, Word documents, code files -- all mixed together with inconsistent naming conventions. Some had dates in the filename, some didn't. Some were duplicates. A few were corrupted.

The manual approach would have taken days. With MCP, it took about twenty minutes to set up and ten minutes to run.

I connected Claude to a file system MCP server that could read file metadata, move files, create directories, and rename things. Then I gave Claude a simple set of instructions: "Scan every file in this directory. Classify each one by type and date. Create a folder structure organized by year, then by file type. Rename files using our convention: YYYY-MM-DD_descriptive-name.ext. Flag any duplicates or corrupted files in a separate report."

Claude processed all 2,000+ files. It created the folder structure, moved every file, renamed them consistently, identified 47 duplicates, and flagged 3 corrupted files. The client's reaction was the same as my developer friend's: stunned silence, then "how do I learn to do this?"

That's MCP. That's Level 3.

Building Custom MCP Servers

Here's where it gets really interesting. You can build your own MCP servers. Any service with an API can become one. Any internal tool your company uses can become accessible to Claude through a custom connector.

I've built MCP servers for clients that connect Claude to their internal CRM, their custom deployment pipeline, and their proprietary data analysis tools. The pattern is always the same: define the tools (what actions are available), implement the handlers (what happens when each tool is called), and register the server with Claude's MCP client configuration.

Anthropic publishes SDKs for Python and TypeScript, and the protocol spec is well-documented. Most MCP servers I've built took less than a day from concept to working prototype.

The implication is wild: Claude's capabilities aren't fixed. They're extensible. Every MCP server you connect makes Claude more capable -- not in terms of reasoning, but in terms of what it can actually do.

Automation Workflows That Run Without You

The real power of Level 3 isn't doing one thing faster. It's chaining actions together into workflows that handle entire processes.

Imagine this: you get a new client inquiry via email. Claude reads the email (Gmail MCP), extracts the project requirements, creates a new project card in your management tool (Notion MCP), drafts a proposal based on your template (file system MCP), schedules a follow-up reminder (Calendar MCP), and sends a personalized acknowledgment reply (Gmail MCP again). All from a single instruction: "Process the new client inquiry from [name]."

I'm not describing a hypothetical. I've built variations of this workflow for my own practice and for clients. The time savings compound dramatically when you consider that these workflows execute consistently every time. No steps forgotten. No follow-ups missed. No "I'll get to that later" that turns into "I completely forgot about that."

How Does This Compare to Just Using the API Directly?

This is a question I get a lot from developers, and it's worth addressing head-on.

Yes, you can build all of this using Anthropic's API directly. Custom integrations. Tool use. Multi-step workflows. The API gives you complete control.

But MCP offers something the raw API doesn't: a standardized, reusable, shareable protocol. When you build an MCP server for Slack, anyone else using Claude can connect to it. When someone in the community builds an MCP server for Jira, you can use it without writing integration code. The ecosystem effect matters.

Think of it like the difference between writing raw HTTP requests and using a REST framework. Both get the job done. One gives you an ecosystem, conventions, and composability that the other doesn't.

For one-off integrations with unique requirements, the API is the right choice. For building a connected system where Claude interacts with multiple services through a consistent interface, MCP wins -- and it wins bigger the more services you connect.

The Progression Path: How to Actually Level Up

I've thrown a lot at you. Here's how to absorb it without getting overwhelmed.

Week 1-2: Master Level 1. Start using the Context + Task + Format framework for every prompt. Set up one Project for your most active work area. Try Extended Thinking on a problem you've been stuck on. Build one Artifact -- a UI component, a data tool, anything interactive. Connect one Connector to a service you use daily.

Week 3-4: Build Your Skills Library. Create three to five Skills for your most repetitive tasks. Code review criteria. Documentation templates. Analysis frameworks. Each Skill you build is time you never spend re-writing that prompt again.

Week 5-6: Enter Level 2. Install Claude Code in your preferred environment. Run init on your main project. Build one custom Command for a task you do daily. Set up one Hook for automated quality checks. Experience the difference between describing your code and letting Claude read it directly.

Week 7-8: Explore Level 3. Install one community MCP server -- file system or GitHub are great starting points. Use it for a real task. Once you see the pattern, try connecting a second service. Then start thinking about which workflows in your daily routine could be automated by chaining MCP actions together.

Month 3 and beyond: Build custom MCPs. Identify internal tools or services that aren't covered by existing MCP servers. Build your own. This is where the leverage becomes exponential, because you're giving Claude access to capabilities nobody else has.

The progression is deliberate. Each level builds on the one before it. The prompting skills you develop in Level 1 make you more effective in Level 2. The automation thinking you develop in Level 2 prepares you to design workflows in Level 3. Skip a level and you'll hit a wall.

The Honest Tradeoffs Nobody Mentions

I've painted a compelling picture, so let me balance it with reality.

Level 1 is enough for most people. If you're using Claude for writing, research, analysis, or occasional coding help, a well-configured Project with good Skills and the right prompting framework will serve you beautifully. You don't need Claude Code. You don't need MCP. Sophistication for its own sake is a trap.

Level 2 requires trust calibration. Giving an AI agent write access to your codebase means you need guardrails. Hooks, review steps, version control discipline. I've seen developers let Claude Code run unsupervised and end up with changes they didn't understand in files they didn't expect. Use the power, but use it with checks in place. Always review diffs before committing.

Level 3 has a security surface area. Every MCP connection is an integration point, and every integration point is a potential vulnerability. Be intentional about which servers you connect, what permissions you grant, and what data flows through the pipeline. I treat MCP connections with the same scrutiny I'd apply to any third-party API integration -- because that's exactly what they are.

The models aren't infallible at any level. Opus 4.6 is brilliant, but it still hallucinates. Sonnet 4.6 is fast, but it still misses nuance. The three-level framework makes Claude dramatically more capable, but it doesn't make Claude perfect. You're still the expert. Claude is the force multiplier.

I used to think the biggest AI skill was prompting. I was wrong. The biggest skill is knowing when to trust the output and when to verify it. That judgment only comes from experience, and no framework can shortcut it.

What Happens When You Use All Three Levels Together

Here's the picture I want to leave you with.

Last month, I took on a project that would have taken my solo practice two weeks to deliver. A client needed a full-stack web application with authentication, a dashboard, integration with their existing CRM, and deployment to AWS. Two weeks was their timeline. Tight but doable.

I delivered it in six days.

Level 1 handled the planning phase. Extended Thinking helped me architect the system. A Project held all the client requirements and tech specifications. Skills generated the API documentation and database schema.

Level 2 handled the building. Claude Code wrote the initial codebase, ran tests, caught bugs, and iterated on feedback -- all inside my terminal. Hooks ensured every commit passed linting and type checks. Custom Commands automated the repetitive parts of the development cycle.

Level 3 handled the integration. An MCP server connected Claude to the client's CRM API for data mapping. Another MCP server managed the AWS deployment pipeline. A third handled the notification system setup.

Six days. Not because the AI did everything -- it didn't. I made architecture decisions. I reviewed every critical piece of code. I handled the edge cases that required human judgment. But the AI handled the volume. The boilerplate. The repetitive testing cycles. The integration plumbing.

That's the real promise of the three-level framework. Not replacing your expertise. Amplifying it so dramatically that the limiting factor stops being your speed and starts being your ambition.

So here's my challenge to you: figure out which level you're operating at right now. Then spend the next two weeks pushing into the next one. Not because you need to reach Level 3 to be productive -- but because once you see what's possible at each level, you'll never want to go back to working without it.

FAQ

Frequently Asked Questions

Everything you need to know about this topic

Claude Code requires a Pro or Team subscription, and usage beyond included allowances is billed based on token consumption. The free tier gives you access to Claude Chat (Level 1) with usage limits. For serious development work, the Pro plan is the minimum I'd recommend.

Yes -- many community MCP servers can be installed and configured with minimal technical knowledge, often just editing a JSON config file. Building custom MCP servers requires coding ability, but using existing ones does not. See the MCP server directory for plug-and-play options.

Sonnet 4.6 handles 80% of coding tasks well and responds faster. Switch to Opus 4.6 for complex architecture decisions, multi-file refactoring, or debugging that requires deep reasoning across your codebase. For the full breakdown, see the model comparison section above.

Extended Thinking (Level 1, in Chat) gives Claude more time to reason through problems step by step. Ultra Think (Level 2, in Claude Code) provides a larger reasoning scratchpad specifically designed for multi-file, codebase-aware problems. Use Extended Thinking for general complex questions; use Ultra Think for complex code problems.

Let's Work Together

Looking to build AI systems, automate workflows, or scale your tech infrastructure? I'd love to help.

- Fiverr (custom builds & integrations): fiverr.com/s/EgxYmWD

- Portfolio: mejba.me

- Ramlit Limited (enterprise solutions): ramlit.com

- ColorPark (design & branding): colorpark.io

- xCyberSecurity (security services): xcybersecurity.io