I Gave Perplexity Computer My To-Do List for a Week

I watched an AI agent spin up eleven sub-agents in parallel, research competitors across three markets simultaneously, and deliver an interactive HTML dashboard — all while I was making coffee.

That's not a hypothetical. That happened on day two of testing Perplexity Computer, and honestly, it messed with my head a little. Not because the output was perfect (it wasn't), but because the speed of orchestration felt like glimpsing how work is going to function in eighteen months. Multiple AI models, coordinated by a single conductor, executing tasks that would have eaten half my afternoon.

Jack Roberts — entrepreneur, AI automation builder, the kind of guy who stress-tests tools instead of just demoing them — put Perplexity Computer through a series of real business scenarios recently. His review caught my attention because he didn't just kick the tires. He loaded the truck. Partnership research. Competitive intelligence dashboards. Reddit mining for business ideas. The tasks that actually matter to someone running a business, not the "write me a poem about a cat" benchmarks that tell you nothing.

I've spent the past week running my own tests alongside studying Jack's findings, and what I found is a product that's simultaneously impressive and frustrating — sometimes in the same task. The orchestration is genuinely novel. The gaps are genuinely annoying. And the pricing model will either make perfect sense or feel outrageous depending on exactly one thing I'll get to later.

Here's everything you need to know before deciding if Perplexity Computer deserves a slot in your workflow.

What Perplexity Computer Actually Is (Because the Name Is Confusing)

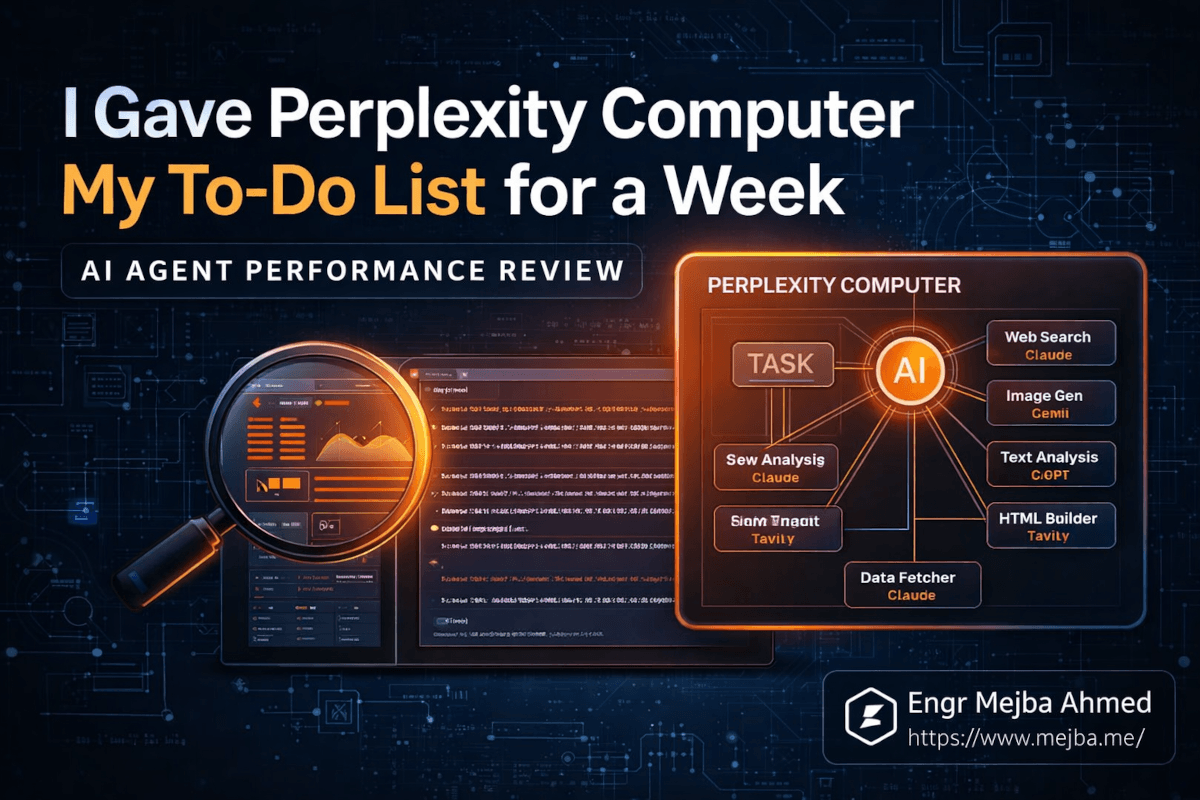

The name "Perplexity Computer" doesn't do this product any favors. It sounds like a hardware product, or maybe a rebranded laptop. It's neither. Perplexity Computer is an AI agent platform — think of it as a command center where you describe a complex task and an AI system breaks it down, delegates sub-tasks to specialized models, and assembles the results.

The architecture underneath is what makes it interesting. Claude sits at the center as the orchestrator. When you give Perplexity Computer a task, Claude analyzes what needs to happen, then spins up specialized sub-agents — each potentially using a different AI model — to handle individual pieces. One sub-agent might research companies via web search. Another generates images. A third drafts email copy. A fourth builds an HTML presentation from the collected data.

Twenty-plus models working in parallel, coordinated by one conductor. That's the pitch. And to be fair, that's roughly what happens in practice.

The integration layer is the second piece worth understanding. Perplexity Computer connects to Gmail, Google Drive, Notion, and other productivity tools through what they call "connectors." You authorize access — with granular permissions, which I appreciated — and the agent can then read your emails, create Notion pages, draft messages, and pull documents as part of its task execution.

If you've used Zapier or Make, the connector concept is familiar. The difference is that here, the AI decides when and how to use those connectors based on your instructions. You don't build a workflow. You describe what you want, and the system figures out the plumbing.

That sounds magical on paper. In practice, the "figuring out" part is where things get both exciting and complicated.

How Does Perplexity Computer Handle Real Business Tasks?

Jack Roberts tested three specific scenarios that mirror what an entrepreneur actually needs from an AI assistant. I ran similar tests. Here's what happened.

Test 1: Strategic Partnership Research

The prompt was straightforward: identify five potential strategic partners for an AI automation business, research each one, and deliver the findings as an HTML/CSS presentation.

Perplexity Computer broke this into parallel research threads. Multiple sub-agents went out simultaneously — searching for companies, pulling revenue data, identifying partnership structures, finding commission details. The results came back as a styled HTML document with company profiles, estimated partnership value, and contact information.

The good: the parallelization was genuinely fast. What would take a human researcher two to three hours of tab-switching and note-taking happened in minutes. The presentation formatting was clean enough to share with a team.

The not-so-good: the partner selection criteria were... broad. The agent cast a wide net rather than asking clarifying questions about what kind of partnerships mattered most. If you run an AI consultancy, there's a massive difference between "companies that offer affiliate programs" and "companies whose customer base overlaps with yours and whose product complements your service." Perplexity Computer defaulted to the first interpretation.

This is a pattern I noticed repeatedly, and I'll dig into why it matters in a moment.

Test 2: Competitive Intelligence Dashboard

This one impressed me most. The task: research ten speech-to-text companies, gather user counts, ratings, and pricing, then build an interactive dashboard.

The agent produced an actual working HTML dashboard with competitor profiles, pricing comparison tables, sentiment analysis from user reviews, and filterable data. Multiple models handled different aspects — one scraped pricing pages, another analyzed review sentiment, another built the visualization layer.

I have to be honest: the dashboard quality exceeded what I expected. It wasn't Tableau. But as a quick competitive snapshot you could drop into a strategy meeting? Absolutely usable. The sentiment analysis pulled real user complaints and praise points, not generic summaries.

The token cost for this task was roughly 400-500 credits out of the 10,000 monthly allotment on the Max plan. Reasonable for the output quality.

Test 3: Mining Reddit for AI Business Ideas

This was the most creative use case. The instruction: scan Reddit for high-engagement posts about AI pain points, then identify viable business opportunities.

The agent trawled multiple subreddits, identified recurring themes, and came back with a structured analysis. The most interesting finding — which both Jack and I found genuinely insightful — centered on AI hallucination anxiety. People aren't just annoyed by hallucinations. There's an emerging market around "trust infrastructure" for AI outputs. Verification layers, confidence scoring, human-in-the-loop checkpoints.

That's the kind of insight that takes a human analyst hours of Reddit scrolling to surface. The agent got there in minutes by processing volume that no single person could match.

But here's where things get interesting — and where the biggest limitation of Perplexity Computer reveals itself.

The Missing Conversation: Why No Clarification Changes Everything

Across all three tests, and across every task I ran during my week with the tool, Perplexity Computer never once asked me a clarifying question before executing.

Think about what happens when you hand a task to a sharp junior employee. They come back with questions. "When you say 'strategic partners,' do you mean technology integrations, referral partnerships, or co-marketing arrangements?" That back-and-forth takes five minutes and saves five hours of wasted work.

Perplexity Computer skips that step entirely. You give instructions, and it sprints. The agent interprets your prompt, makes assumptions about ambiguities, and executes at full speed. Sometimes those assumptions align with what you wanted. Sometimes they don't, and you've burned credits on output that misses the mark.

Jack flagged this as his primary frustration, and after a week of testing, I agree completely. The lack of an interactive planning phase before execution is Perplexity Computer's single biggest weakness.

Here's why it matters practically: when you're paying per-credit and the agent consumes 300-500 credits per complex task, a wrong interpretation isn't just annoying — it's expensive. I burned roughly 200 credits on a research task that went sideways because the agent interpreted "high-growth SaaS companies" as "companies with the most employees" rather than "companies with the fastest revenue growth rate." A ten-second clarification would have prevented that.

The fix seems obvious: add a planning step where the agent outlines its approach and asks for confirmation before executing. Claude already does this well in other contexts. The absence here feels like a deliberate speed-over-accuracy trade-off, and I think it's the wrong one for tasks that cost real money.

That said — and this is the nuance most reviews miss — the lack of clarification is only a problem if your prompts are ambiguous. When I wrote extremely specific instructions with explicit criteria, output format requirements, and scope boundaries, the results were consistently strong. The tool rewards precision. Vague prompts get vague results. Detailed prompts get detailed results.

Which brings us to the skill that actually determines whether Perplexity Computer is worth $200 a month for you.

The Prompt Engineering Tax: What Nobody Tells You About AI Agents

There's an uncomfortable truth about every AI agent tool on the market right now, and Perplexity Computer is no exception: the time you save on execution, you partially pay back in prompt engineering.

Writing a good prompt for Perplexity Computer isn't like writing a ChatGPT prompt. You're not having a conversation. You're writing a brief. A project specification. The more precisely you define success criteria, output format, scope boundaries, and quality thresholds, the better the results.

Here's an example. This prompt produces mediocre results:

Research my competitors in the AI automation space and create a summary.

This prompt produces genuinely useful output:

Research 8 companies that offer AI workflow automation for small businesses

(under 500 employees). For each company, find:

- Founding year and total funding raised

- Primary product offering and pricing tiers

- Number of integrations supported

- G2 or Capterra rating (most recent)

- One specific customer complaint from reviews

Output as an HTML table with sortable columns. Include a 2-paragraph

executive summary at the top highlighting the biggest gap in the market

that none of these companies adequately address.

The second prompt took me three minutes to write. The output it generated would have taken me four to five hours to compile manually. That's still a massive net time savings. But the three minutes of prompt crafting matter, and if you're the kind of person who wants to type "do my research" and get exactly what you need, you'll be disappointed.

I've started keeping a prompt template library for recurring Perplexity Computer tasks. Partnership research. Competitive analysis. Content opportunity identification. Each template took fifteen to twenty minutes to refine through iteration. Now I swap in specifics and run them. The upfront investment pays for itself within two or three uses.

If you'd rather have someone build this kind of AI automation workflow from scratch — prompt templates, connector setup, the whole system — I take on exactly these kinds of projects. You can see what I've built at fiverr.com/s/EgxYmWD.

But the templates only partially solve the problem. The real question is whether Perplexity Computer's execution quality justifies the premium pricing.

Breaking Down the $200 Question

Perplexity Computer's pricing is binary. The free tier gives you basic Perplexity search — no Computer access, no agent capabilities. To use anything I've described in this article, you need the Max plan at $200 per month.

That $200 gets you 35,000 bonus credits (one-time) plus 10,000 monthly credits. Based on my testing, a moderately complex task — competitive research, content generation, email drafting — consumes between 200 and 500 credits. Simple tasks like summarization or single-query research use 50-100 credits.

Let me do the math that matters. Jack's three test tasks consumed roughly 1,300 credits total, about 13% of the monthly allotment. That means you could run approximately 20-25 similar complex tasks per month before hitting the credit wall.

Is that worth $200? It depends entirely on what your time is worth and what you're replacing.

Scenario A: You're replacing manual research. If you currently spend 3-4 hours per week on competitive research, market analysis, or prospect identification, and Perplexity Computer cuts that to 30 minutes of prompt writing plus review, you're reclaiming 10-14 hours per month. At any reasonable hourly rate, $200 is a bargain.

Scenario B: You're replacing existing AI tools. If you're already using ChatGPT Pro ($20/month) and a separate research tool like Tavily or SerpAPI, the comparison gets tighter. Perplexity Computer's orchestration adds genuine value over single-model tools for multi-step tasks. But for simple queries, it's overkill.

Scenario C: You're exploring out of curiosity. Don't. The free tier is fine for basic search. The $200 jump only makes sense if you have specific, recurring multi-step tasks that currently eat your time.

The 35,000 bonus credits on signup are a smart move by Perplexity. They give you enough runway to test complex workflows without feeling credit-anxious during your first month. After that bonus depletes, the 10,000 monthly credits require more careful budgeting.

One thing I wish they offered: a middle tier. Something around $50-80/month with 3,000-4,000 credits for people who need Computer access occasionally but don't run enough complex tasks to justify the full Max plan. The jump from free to $200 is steep enough to stop a lot of potential users who'd happily pay $75.

The Security Model That Actually Makes Sense

I almost skipped this section, which would have been a mistake. Perplexity Computer's security approach is one of its strongest — and most underappreciated — features.

When you connect Gmail, Notion, or Google Drive, the system follows what security professionals call the "principle of least access." The agent requests only the minimum permissions needed for the specific task. Connect Gmail for email drafting? It gets compose access, not full mailbox read access. Link Notion for page creation? It doesn't get access to your entire workspace.

As someone who works in cybersecurity, this matters enormously. Most AI agent platforms request broad OAuth scopes because it's easier for developers. Perplexity's approach suggests their security team actually thought about threat models instead of just shipping the fastest integration.

The custom instructions field — capped at 1,500 characters — is another subtle security feature. It constrains what the agent can do without explicit per-task prompting. You can set guardrails like "never send emails without showing me a draft first" or "don't access any Google Drive files in the Finance folder." These persistent instructions act as a policy layer between you and the agent's autonomous execution.

Is it bulletproof? No. Any tool with access to your email and documents carries inherent risk. But Perplexity's implementation is more thoughtful than most competitors I've evaluated. They're building trust through constraint, which is the right instinct.

What Claude's Orchestration Role Means for the AI Agent Landscape

Here's the thing that fascinates me most about Perplexity Computer, and it has nothing to do with features or pricing.

Claude — Anthropic's model — serves as the orchestrator. The brain that decomposes tasks, assigns sub-agents, and synthesizes results. Not GPT. Not Gemini. Claude. This is a significant architectural choice with implications worth thinking about.

Claude's strength has always been in structured reasoning and instruction-following. It's the model I reach for when I need something to carefully parse a complex brief and execute methodically rather than creatively riff. That makes it an excellent choice for orchestration, where the primary job is decomposition and delegation rather than generation.

What's happening underneath is a kind of AI team structure. Claude acts as the project manager. Specialized models act as individual contributors — one handles web research, another handles image generation, another handles data visualization. The project manager doesn't need to be the best at any individual skill. It needs to be the best at understanding what skills are needed and coordinating them.

I've built similar multi-agent architectures using Claude Code with agent teams, and the pattern works. The orchestrator model matters less for its raw capability and more for its reliability in following complex instructions without drift. Claude is arguably the best model available for that specific job right now.

This architecture also means Perplexity Computer improves whenever any of its component models improve. A better image generation model drops? The agent gets better at visual tasks without Perplexity changing a line of code. A faster research model launches? Integration improves research speed. The platform becomes a beneficiary of the entire AI ecosystem's progress, not just one model vendor's roadmap.

That's a smart bet. And it's why I think Perplexity Computer — or something very like it — represents where AI tools are heading in 2026 and beyond.

The "AI Employee" Mental Model: Useful or Dangerous?

Jack Roberts frames Perplexity Computer as an "AI employee" where you play CEO. Give strategic direction, delegate execution, review results. It's a compelling metaphor, and I've caught myself thinking in those terms after a week of use. But I want to push back on it slightly, because the metaphor has a failure mode.

Real employees learn. They build context over time. They remember that last quarter's partnership push with Company X fell apart because of pricing disagreements, so they adjust this quarter's approach. They notice patterns across tasks and proactively flag things.

Perplexity Computer doesn't do any of that. Each task is stateless. The agent doesn't remember what it did yesterday. It doesn't learn your preferences across sessions (beyond the 1,500-character custom instructions). It doesn't proactively suggest tasks based on patterns it's observed.

This makes the "employee" framing misleading in a specific way: it sets expectations for contextual intelligence that the tool can't deliver. A better mental model is "AI contractor." You write a detailed brief. The contractor executes it skillfully. They deliver results and walk away. Next time, you write another brief from scratch.

That reframing changes how you use the tool. You stop expecting it to "know" things and start investing in better briefs. You build your own institutional memory (in prompt templates, in saved outputs, in Notion wikis) rather than expecting the tool to maintain it for you.

I actually think this is fine. A reliable contractor who executes well from a clear brief is enormously valuable. But pretending it's an employee creates frustration when it forgets everything between sessions.

The custom instructions help bridge this gap partially. I used mine to encode preferences: "Always format competitor research as tables. Always include pricing data denominated in USD. Always flag when a source is older than 6 months." These persistent instructions create a thin layer of "memory," and I recommend spending all 1,500 characters thoughtfully.

What I'd Do Differently After a Week

If I were starting fresh with Perplexity Computer today, knowing what I know now, here's exactly what I'd change about my approach.

First, I'd spend day one on prompt templates, not tasks. My first day was scattered — testing random capabilities, burning credits on exploration. That's natural but expensive. Instead, identify your three to five most common research or analysis tasks and spend the first session refining prompts for each. Test, adjust, test again. Those templates become your ROI multiplier for every future session.

Second, I'd set up connectors on day one but use them on day three. The Gmail and Notion integrations are powerful, but I found that tasks combining research and tool actions (like "research competitors and email me a summary") consumed more credits and produced worse results than tasks doing one thing well. Start with pure research and generation tasks. Add connector-based actions once you understand how the agent interprets your instructions.

Third, I'd write a custom instruction set immediately. Don't leave those 1,500 characters empty. Mine now includes output format preferences, quality thresholds, source requirements, and explicit instructions to never send emails without showing me the draft first. This small investment improved output quality across every subsequent task.

Fourth, I'd keep a credit budget spreadsheet. It sounds tedious. It took me ten minutes to set up. But tracking credit consumption per task type revealed that some tasks were dramatically more credit-efficient than others. Competitive research: high value per credit. Email drafting: low value per credit (I can draft emails faster myself). That visibility changed how I allocated my monthly budget.

Fifth, I'd pair it with a human review step and never skip it. The agent's outputs are good starting points. They're rarely finished products. Every dashboard, every research summary, every email draft needed human editing — tightening analysis, correcting subtle errors in company data, adjusting tone. Budget fifteen to twenty minutes of review per complex task output.

Where This Goes Next (And Why I'm Watching Closely)

Perplexity Computer as it exists in March 2026 is a version 1.0 product wearing a version 2.0 price tag. The core orchestration technology is genuinely impressive. The execution quality on well-prompted tasks is high. The security model is thoughtful. The integrations work.

But the missing pieces are significant. No interactive planning. No cross-session memory. No preference learning. No ability to ask "hey, did you mean X or Y?" before burning credits on a task. These aren't edge cases — they're the features that separate a powerful tool from a reliable team member.

Here's my prediction: within six months, Perplexity will add a planning step. Some form of "here's my understanding of your task, here's my plan, confirm before I execute." The credit-burn feedback loop is too painful for enough users that the feature request will be unavoidable. When that lands, the value proposition jumps significantly.

The broader trend matters more than any single product. AI agents that orchestrate multiple models in parallel, connected to your real productivity tools, executing multi-step tasks autonomously — that's the trajectory. Perplexity Computer is one implementation of that vision. Google's Project Mariner is another. OpenAI's agent framework is a third. The concept is converging across the industry.

What separates winners from also-rans in this space won't be raw capability. It'll be the user experience around that capability. How well does the agent communicate what it's doing? How gracefully does it handle ambiguity? How efficiently does it use credits? How trustworthy does it feel with access to your email?

On those dimensions, Perplexity Computer scores unevenly. Strong on trust and transparency. Weak on communication and ambiguity handling. Solid on capability. Expensive but defensible on pricing.

Should You Actually Subscribe?

I'll make this concrete.

Subscribe if you run a business or freelance practice where you regularly need competitive research, market analysis, prospect identification, or content generation across multiple sources. If you're spending five or more hours per week on multi-step research tasks that could be described in a written brief, Perplexity Computer will pay for itself within the first month.

Don't subscribe if you mainly need single-query answers, conversational AI, or writing assistance. ChatGPT, Claude, or Perplexity's free search tier handle those use cases at a fraction of the cost. The $200 monthly commitment only makes sense when you're consistently running complex, multi-step agent tasks.

Wait if you're intrigued but don't have an immediate use case. The product will be meaningfully better in six months. The planning step will arrive. The credit efficiency will improve. Early adopters are paying a premium to beta-test the workflow — and that's fine if you need the capability now, but unnecessary if you're just exploring.

After a week of testing, I'm keeping my subscription for another month. The competitive intelligence workflows alone have saved me enough time to justify the cost. But I'm keeping it on a short leash. If my prompt templates stop evolving — if I stop finding new valuable use cases — I'll drop back to the free tier without hesitation.

The technology direction is exciting. The current product is useful but incomplete. And the question that'll determine whether Perplexity Computer becomes an essential tool or a footnote isn't about AI capability. It's about whether Perplexity builds the human-AI communication layer that transforms a powerful engine into a trustworthy collaborator.

They're not there yet. But they're closer than anyone else I've tested.

FAQ

Frequently Asked Questions

Everything you need to know about this topic

Perplexity Computer is an AI agent platform that uses Claude as a central orchestrator to coordinate 20+ specialized AI models in parallel. You describe a complex task, and the system decomposes it into sub-tasks handled by different models, then assembles the results. For the full architecture breakdown, see the section on what Perplexity Computer actually is above.

The Max plan costs $200/month and includes 35,000 one-time bonus credits plus 10,000 monthly credits. A moderately complex task uses 200-500 credits, meaning you can run roughly 20-25 complex tasks per month. There is no middle pricing tier — the free plan excludes Computer access entirely.

Perplexity Computer uses a principle-of-least-access security model, requesting only the minimum permissions needed for each connected service. Gmail integration grants compose access without full mailbox read permissions. You can set persistent custom instructions to add guardrails like requiring draft review before sending.

For multi-step research requiring parallel execution across multiple sources, Perplexity Computer outperforms single-model tools like ChatGPT. For simple questions, conversational AI, or writing assistance, ChatGPT at $20/month is more cost-effective. The value gap widens as task complexity increases.

No. Each task is stateless — the agent doesn't retain context between sessions. You can partially work around this with the 1,500-character custom instructions field, which persists across tasks. For deeper context persistence, maintain your own prompt template library and reference documentation externally.

Let's Work Together

Looking to build AI systems, automate workflows, or scale your tech infrastructure? I'd love to help.

- Fiverr (custom builds & integrations): fiverr.com/s/EgxYmWD

- Portfolio: mejba.me

- Ramlit Limited (enterprise solutions): ramlit.com

- ColorPark (design & branding): colorpark.io

- xCyberSecurity (security services): xcybersecurity.io