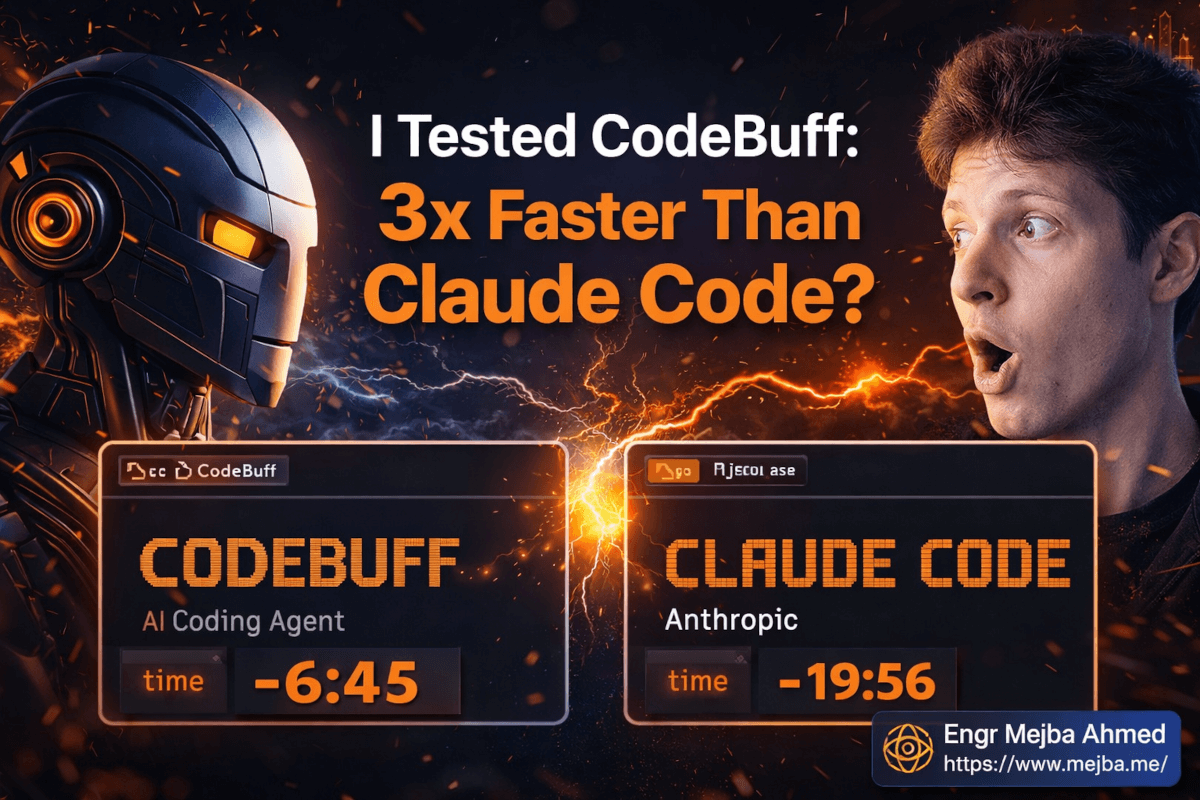

I Tested CodeBuff: 3x Faster Than Claude Code?

Six minutes and forty-five seconds.

That is how long CodeBuff took to build a feature that took Claude Code almost twenty minutes. Same task. Same machine. Same developer at the keyboard: me, with a stopwatch and a healthy dose of skepticism.

The part that really stung? CodeBuff's output ran cleanly on the first try. My Claude Code session needed two correction passes before it worked properly.

I'll be straight with you: I went into this test hoping CodeBuff would disappoint me. I had months of workflow investment in Claude Code: custom agents, slash commands, hooks, a whole ecosystem shaped around the way I think. Switching costs are real. So when I installed CodeBuff and started experimenting, I was actively looking for the cracks.

I found some. But not enough to ignore what this tool does architecturally.

What changed my mind was not the speed benchmark. Speed is the obvious headline. What most reviews fail to explain is why CodeBuff is faster, and that reason has implications for how all AI coding tools will work two years from now. I'll get to that shortly, but first let me explain what CodeBuff actually is, because most of the coverage I have read gets it fundamentally wrong.

The Problem Every AI Coding Tool Ignores

Every major AI coding agent shares a hidden assumption: one model handles your entire problem. One context window. One reasoning pass. One set of blind spots.

That is how a solo developer works. And solo developers, even brilliant ones, have a well-documented failure mode: they get stuck inside their own mental model. When you write code and then review that same code, you read it the way you intended it to work, not the way it actually executes. You miss the edge case you never considered. You overlook the coupling that is obvious to someone seeing the codebase fresh.

That is why software teams exist. One person plans the architecture, another writes the implementation, another reviews for bugs, another verifies performance assumptions. The roles exist because structured collaboration catches what individual thinking misses.

CodeBuff looked at this and asked the question none of the other tools asked: what if an AI coding agent were structured like a team instead of a solo employee?

The answer is a multi-agent system. Several specialized sub-agents with distinct roles (planning, implementation, code review) coordinate to produce output that has been considered from more than one angle before it reaches you. It is Apache 2.0 licensed, installs in two minutes, and runs Anthropic's Opus 4.6 (or Minimax M2.5 on the free tier), depending on your plan.

I have been building seriously with AI agents for about two years: automation pipelines, Claude integrations, multi-agent workflows for clients at Ramlit. So when CodeBuff showed up on my radar, I was not approaching it as someone kicking the tires. I had real projects to test it on and real comparisons to make.

Here is what two weeks of real use taught me.

Why the Architecture Is the Real Story

The easiest way to understand CodeBuff's multi-agent setup is to think about what happens when you debug code alone versus with a pair partner.

Debugging alone, you stay stuck in your own assumptions. You wrote the code, so you read it charitably. Your pair partner, who has just sat down at your screen with no prior context, finds the problem in thirty seconds because your mental model is not baked into their reading. Fresh eyes see what familiarity hides.

CodeBuff's sub-agents work on the same principle. One agent generates a solution. A separate editor agent reviews that code independently, checking for bugs, edge cases, and logic errors, without the implementation context that might lead it to rationalize problems away. The two agents do not share the same reasoning chain. The review is genuinely separate.

In Plan Mode, a planning agent runs before any implementation takes place. It asks clarifying questions, outlines an approach, and hands a specification to the implementation agent. The implementation agent builds against a structured brief instead of an open-ended prompt, which makes a significant difference in output consistency.

Max Plan takes this further. Several sub-agents generate candidate solutions in parallel. CodeBuff evaluates them automatically and delivers the strongest output. The TUI, CodeBuff's interactive terminal interface, shows you the status of each agent in real time. You can watch the parallel generation, see code diffs appear, and follow the review pass as it catches problems before they reach you.

The first time I ran Max Plan and saw three agents working on different solution paths in parallel, my reaction landed somewhere between "this is chaotic" and "oh, this is actually how the problem should be approached." If you have spent time debugging AI-generated code that confidently went in the wrong direction, the idea of several parallel attempts with automatic selection feels less like overhead and more like insurance.
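The "parallel attempts with automatic selection" pattern can be sketched in a few lines of TypeScript. This is only a conceptual illustration under stated assumptions: the `Candidate` shape, the numeric `score`, and both function names are hypothetical, and nothing here reflects how CodeBuff actually evaluates its candidates.

```typescript
// Hypothetical types for illustration; not CodeBuff's real API.
interface Candidate {
  agentId: string;
  diff: string;
  score: number; // assumed reviewer-assigned quality score, higher is better
}

type CandidateGenerator = (task: string) => Promise<Candidate>;

// Pick the highest-scoring candidate; ties go to the earliest one.
function pickBest(candidates: Candidate[]): Candidate {
  if (candidates.length === 0) {
    throw new Error("no candidates to choose from");
  }
  return candidates.reduce((best, c) => (c.score > best.score ? c : best));
}

// Start every generator at once, wait for all of them, then select one result.
async function runInParallel(
  task: string,
  generators: CandidateGenerator[],
): Promise<Candidate> {
  const candidates = await Promise.all(generators.map((g) => g(task)));
  return pickBest(candidates);
}
```

The point of the sketch is the shape of the trade: wall-clock time is bounded by the slowest generator rather than the sum of all attempts, while token cost scales with the number of generators.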

The Buffbench Numbers and What They Really Mean

CodeBuff's internal benchmark, Buffbench, ran across 175 real engineering tasks. No contrived toy problems. Tasks involving multi-turn conversations, reconstructing real Git commits, and building features against real codebases with existing patterns and constraints.

That distinction matters. Sanitized benchmark tasks flatter every tool. The interesting failures happen on Monday morning, when a client's authentication flow is broken, the codebase carries four years of accumulated decisions, and the model has to hold conflicting context all at once. That is when single-agent tools show their limits.

The headline result: up to three times faster than competitors, including Claude Code, across these tasks, with higher output quality.

The feature build I mentioned at the start (almost 20 minutes for Claude Code versus 6 minutes 45 seconds for CodeBuff) was not cherry-picked from a best-case run. A gap of that size held up across the benchmark. And the quality gap was real: CodeBuff's output needed less follow-up prompting to correct.

Here is the point I kept coming back to: speed is useful, but speed from the wrong architecture is misleading. If a tool is fast because it skips reasoning steps, you pay for that speed later in correction passes. What CodeBuff does (run parallel agents with focused contexts, then review the output) is fast because the architecture is sound, not because it takes shortcuts.

The architectural insight is this: specialized agents with narrow contexts outperform generalist agents with bloated contexts. That is the real reason for the speed difference. As a task grows more complex, a single model's context fills up with accumulated decisions, earlier assumptions, and noise from the conversation history. The model starts to lose coherence. CodeBuff's sub-agents each hold a focused context, which means they stay clear for longer on complex tasks.

Understanding the Four Modes

CodeBuff is not a one-size-fits-all setting. The mode you pick affects both output quality and token cost, and using the wrong mode for a task is a real mistake.

Free tier (Minimax M2.5): Capable for front-end work and straightforward tasks. A lighter model means faster execution and lower cost. For CSS tweaks, standard component scaffolding, and simple helper functions, this tier gets the job done. Do not use it for anything that needs deep reasoning about business logic or complex state management; that is where the quality gap to Opus 4.6 becomes visible.

Standard (Opus 4.6): Your daily driver for serious work. One implementation agent, one editor agent doing code review. Moderate token consumption. This tier handled about 80% of my real test cases without my having to escalate. For most development tasks on real projects, Standard is the right starting point.

Plan Mode (Opus 4.6): Before a single line of code is written, the planning agent asks you questions. Good questions, the kind a thoughtful senior developer would ask before committing to an approach. Scope boundaries, edge-case handling, integration constraints, failure modes. Your answers shape the implementation brief. The implementation agent then builds against that brief instead of inferring intent from an open-ended prompt.

This mode caught things I had not thought about. More on that in the implementation section.

Max Plan (Opus 4.6, parallel agents): Several sub-agents generate parallel solutions, with automatic selection of the best output. Highest quality, highest token cost. Reserve it for complex, high-stakes tasks where the token spend is justified by the reduced iteration time and the quality difference.

Pricing runs $100/month for 1x tokens, $200/month for 3x, and $500/month for 8x. These tiers exist so that volume does not become a wall. The math works out if you are doing serious daily work on complex projects, but I would strongly recommend starting on the free tier, getting a feel for the tool on your actual workload, and scaling up once you know where CodeBuff delivers the most value for you specifically.
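To see how the tiers compare per unit of token allowance, here is a small worked calculation. It assumes the multipliers (1x, 3x, 8x) are all relative to the same base allowance, which the pricing above implies but does not spell out; the type and function names are made up for the example.

```typescript
// Prices and multipliers as quoted above; the shape itself is hypothetical.
interface PricingTier {
  monthlyUsd: number;
  tokenMultiplier: number; // assumed relative to the $100 tier's allowance
}

const pricingTiers: PricingTier[] = [
  { monthlyUsd: 100, tokenMultiplier: 1 },
  { monthlyUsd: 200, tokenMultiplier: 3 },
  { monthlyUsd: 500, tokenMultiplier: 8 },
];

// Dollars paid per 1x unit of token allowance.
function usdPerTokenUnit(tier: PricingTier): number {
  return tier.monthlyUsd / tier.tokenMultiplier;
}

for (const tier of pricingTiers) {
  console.log(
    `$${tier.monthlyUsd}/month: $${usdPerTokenUnit(tier).toFixed(2)} per 1x unit`,
  );
}
// $100/month: $100.00 per 1x unit
// $200/month: $66.67 per 1x unit
// $500/month: $62.50 per 1x unit
```

Under that assumption, most of the per-unit discount arrives with the jump from $100 to $200; the $500 tier mainly buys volume.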

You are probably wondering: demos are one thing, but how does this hold up on real project work? Here is exactly what I built and what happened.

Building an AI Agent Monitoring Dashboard from Scratch

My test project was an AI agent monitoring dashboard: real-time status tracking, agent execution history, WebSocket connections, and a front end that stays readable under concurrent agent updates. The kind of scope that sounds clean in one sentence and gets complicated quickly once you start dealing with reconnection logic, optimistic UI updates, and state-tree management for nested agents.

I ran this on Max Plan. Here is the actual session flow.

Setting Up the Knowledge File

Before running anything, I created a knowledge.md file in the project root. CodeBuff uses it as persistent context about your project: tech stack, conventions, constraints, anything the agent should know that is not already in the codebase.

Mine looked like this:

# Project Context

## Tech Stack

- Backend: Node.js + Express + WebSocket (ws library)

- Frontend: React + TypeScript + Tailwind CSS

- Database: PostgreSQL via Prisma ORM

## Conventions

- Functional components only, no class components

- Error handling: return typed error objects, never throw raw strings

- API responses follow { data, error, meta } structure consistently

## Constraints

- No third-party state management libraries — React Query + local state only

- WebSocket connections must handle reconnection automatically with exponential backoff

- All agent status updates should be optimistic (update UI before server confirmation)

- Agent hierarchy supports nesting: agents can spawn sub-agents

This file made a noticeable difference in output quality. The agent's first planning pass referenced my stack choices directly, used my error-handling convention without being prompted, and flagged a potential conflict between my "no third-party state management" constraint and the complexity of nested agent state, which was exactly the right thing to flag.
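Two of the conventions in that file, the `{ data, error, meta }` response structure and typed error objects instead of raw strings, are easy to pin down as types. The sketch below is one possible reading of them; the field names inside `ApiError` and `ApiMeta`, the helper functions, and the `AGENT_NOT_FOUND` code are assumptions for illustration and do not come from the project itself.

```typescript
// Hypothetical shapes: the knowledge file only fixes the { data, error, meta } keys.
interface ApiError {
  code: string;
  message: string;
}

interface ApiMeta {
  requestId: string;
}

interface ApiEnvelope<T> {
  data: T | null;
  error: ApiError | null;
  meta: ApiMeta;
}

function okEnvelope<T>(data: T, meta: ApiMeta): ApiEnvelope<T> {
  return { data, error: null, meta };
}

function errorEnvelope<T>(code: string, message: string, meta: ApiMeta): ApiEnvelope<T> {
  return { data: null, error: { code, message }, meta };
}

// Example: a lookup that returns a typed error object instead of throwing a raw string.
function findAgentLabel(labels: Map<string, string>, id: string): ApiEnvelope<string> {
  const meta: ApiMeta = { requestId: `lookup-${id}` };
  const label = labels.get(id);
  return label === undefined
    ? errorEnvelope<string>("AGENT_NOT_FOUND", `No agent with id ${id}`, meta)
    : okEnvelope(label, meta);
}
```

Callers then branch on `error === null` rather than wrapping every call in try/catch, which keeps failure handling visible in the types.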

Running Plan Mode First

I started with Plan Mode instead of jumping straight to implementation. The planning agent came back with five questions:

- Should the dashboard support spawning new agents directly, or only display existing ones?

- What is the expected agent scale: dozens, or potentially hundreds with sub-agents?

- Does the execution history need to persist across browser sessions, or is in-memory sufficient?

- Is there a requirement for role-based access control on agent management?

- How should the dashboard surface agent errors: a silent log entry, a toast notification, or a dedicated status panel?

These are not questions a generic AI generates for every project. They are the questions that determine whether the architecture holds up. When I said "yes, agents can spawn sub-agents from the dashboard," the planning agent immediately noted that WebSocket state management needs a tree structure, not a flat list, and built the implementation specification accordingly.
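The "tree, not a flat list" point is worth making concrete. Below is a minimal sketch of turning flat agent records (as they might arrive over a WebSocket) into a nested structure. The record shape and the `buildAgentTree` name are hypothetical; the code CodeBuff generated for the dashboard is not shown in this article.

```typescript
// Hypothetical record shape; parentId is null for top-level agents.
interface AgentRecord {
  id: string;
  parentId: string | null;
  state: "running" | "done" | "failed";
}

interface AgentTreeNode extends AgentRecord {
  children: AgentTreeNode[];
}

// Build a forest from a flat list in two passes: index the nodes, then link them.
function buildAgentTree(records: AgentRecord[]): AgentTreeNode[] {
  const byId = new Map<string, AgentTreeNode>();
  for (const r of records) {
    byId.set(r.id, { ...r, children: [] });
  }

  const roots: AgentTreeNode[] = [];
  byId.forEach((node) => {
    const parentNode = node.parentId === null ? undefined : byId.get(node.parentId);
    if (parentNode) {
      parentNode.children.push(node);
    } else {
      // Top-level agent, or an orphan whose parent has not been seen.
      roots.push(node);
    }
  });
  return roots;
}
```

Because linking happens in a second pass, the order in which records arrive does not matter: a child can show up before its parent and still end up in the right place.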

That specification became the brief for the Max Plan run.

Watching Max Plan at Work

Parallel execution in the TUI is genuinely something to see the first time. Three agents working on different solution paths at once, diffs appearing in real time, the editor agent's review pass visible as it runs. It felt chaotic until I understood what I was looking at; then it felt like watching a team at work.

Total elapsed time from "start implementation" to "front end and back end both running locally": 12 minutes.

My pre-experiment estimate for this project with my Claude Code workflow was 30 minutes. That estimate was probably optimistic: I have done similarly scoped projects before, and they tend to run longer. CodeBuff finished in less than half the expected time, and the review summary that came with the output caught an edge case I would otherwise have found only during testing: the reconnection logic had to handle the case where a parent agent's WebSocket connection drops while a child agent is mid-execution. The summary flagged it, explained how it was handled, and pointed me to the relevant section of code.
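The reconnection logic builds on the "exponential backoff" constraint from the knowledge file. A minimal sketch of the delay calculation follows, with full jitter added as a common refinement. The 500 ms base and 30 s cap are assumed defaults, not values from the project, and the function names are hypothetical.

```typescript
// Assumed defaults for illustration; tune for your own service.
interface BackoffOptions {
  baseMs: number;
  maxMs: number;
}

// Delay before reconnect attempt n (0-based): base * 2^n, capped at maxMs.
function backoffDelayMs(
  attempt: number,
  opts: BackoffOptions = { baseMs: 500, maxMs: 30000 },
): number {
  const uncapped = opts.baseMs * Math.pow(2, attempt);
  return Math.min(uncapped, opts.maxMs);
}

// Full jitter: a random delay in [0, cappedDelay) so clients do not reconnect in lockstep.
// The random source is injectable to keep the function testable.
function jitteredDelayMs(attempt: number, random: () => number = Math.random): number {
  return Math.floor(random() * backoffDelayMs(attempt));
}
```

With these defaults the un-jittered delays run 500 ms, 1 s, 2 s, 4 s and so on until they hit the 30 s cap. A reconnect loop would pass the result to `setTimeout` and reset the attempt counter once the socket opens again.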

Pro tip: read the summary. It is not boilerplate. The agent's explanation of what it built and why is where you catch the one decision you want to change before it spreads through the codebase. I save these summaries in a decisions.md file in every project.

Common Problems and How to Handle Them

Loading context for a large codebase can be slow on the first run, and occasionally the agent misses files in deep directory structures. The fix is specific knowledge.md entries that point explicitly to the right directories; do not rely on automatic discovery for complex layouts.

Weak results from the free tier on complex tasks are almost always a mode mismatch. If you are testing CodeBuff's limits on the free tier and getting disappointing results, you are probably asking Minimax M2.5 to do something that needs Opus 4.6. Switch to Standard before concluding that the tool underperforms.

If Plan Mode asks more questions than you want on simple tasks, skip it. Plan Mode is designed for tasks where wrong assumptions cost you significant rework time. Single-file changes and simple feature additions do not need it. Match the mode to the complexity of the work.

The Parts I Am Less Comfortable With

Okay, this is where I stop being a product reviewer and start being honest.

CodeBuff is not a Claude Code replacement for every workflow. My Claude Code setup has integrations that do not yet exist in CodeBuff's ecosystem: custom agent configurations, specific slash commands, hooks I built that tie into my project management setup. If you have spent six months building a Claude Code workflow, that investment does not port cleanly. The switching costs are real.

The token economics deserve a clearer look than most reviews give them. Max Plan on complex tasks burns through tokens quickly: you are running parallel Opus 4.6 agents, each with its own context, at the same time. If you treat it as an all-day daily driver for complex projects, the $200-500/month tier is where you will realistically end up. That is not a dealbreaker for professional developers, but it is not nothing either.

My genuinely unpopular opinion: CodeBuff rewards developers who stay engaged. The knowledge.md system, answering Plan Mode's questions, the ability to step in when you see an agent heading in the wrong direction: all of it returns value in proportion to the attention you put in. If you are looking for a fully hands-free "just solve it" button, you will be frustrated no matter which tool you use. The best results I got came from treating CodeBuff like a capable junior team, not like a vending machine.

And one thing I keep thinking about: CodeBuff's architectural advantage is real today. But AI capabilities move fast. Single-model tools are improving their context management. The question is not whether multi-agent coordination is better today; in my testing it clearly was. The question is whether that architectural advantage deepens or narrows as the models themselves improve. My estimate is that the advantage holds for at least 12-18 months. Beyond that, the trajectory is harder to predict.

What Actually Changed in My Numbers

Two weeks, real projects, real measurement:

Complex full-stack project (dashboard): 12 minutes with CodeBuff versus a 30-minute estimate for Claude Code. Output: zero correction passes required. A typical Claude Code run at similar scope: 1-2 correction passes.

Front-end component work (medium complexity): The speed gap narrowed to about 1.5x. For straightforward UI components where the scope is clear and the pattern is established, the multi-agent overhead adds less relative value. The free tier handles this category well.

Back-end API work with complex business logic: Plan Mode was the standout. The clarifying questions caught two ambiguities in the requirements that I had not consciously thought about; both would have surfaced as bugs during testing. That time saving does not show up in a speed benchmark, but it showed up in my actual project timeline.

Where Claude Code still wins: tasks that depend on my existing custom agent setup and integrations. Quick single-file edits where spinning up a multi-agent session is clearly overkill. Tasks where I need very tight control over the exact toolchain.

The metric I would recommend tracking as you adopt CodeBuff: correction passes per task. Count how often you prompt the agent a second time to fix something in the first output. That number should go down on complex tasks. If it does not, you are either using the wrong tier or not giving knowledge.md enough substance. The agent is only as informed as what you tell it.
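Tracking that metric takes very little code. Here is a minimal sketch, assuming you log the total number of prompts per task by hand; the `TaskLog` shape and both function names are hypothetical.

```typescript
// Hypothetical log entry: one row per task you hand to the agent.
interface TaskLog {
  task: string;
  prompts: number; // total prompts sent for the task, including the first
}

// Correction passes are the prompts after the first one.
function correctionPasses(entry: TaskLog): number {
  return Math.max(0, entry.prompts - 1);
}

// Mean correction passes per task; returns 0 for an empty log.
function averageCorrectionPasses(entries: TaskLog[]): number {
  if (entries.length === 0) return 0;
  const total = entries.reduce((sum, e) => sum + correctionPasses(e), 0);
  return total / entries.length;
}
```

Computing the average separately for each tool or tier over a couple of weeks gives a like-for-like comparison on your own workload.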

Quick wins show up immediately on the kind of task where multi-agent review catches bugs in the implementation pass; you will see these in your first serious session. The long-term win is reduced rework on complex features, which adds up over the weeks of a project.

One Task. This Week.

Pick the most annoying feature in your backlog, the one that keeps getting pushed back because the scope seems vague and the edge cases feel like unknowns. Not a toy project. A real feature in a real codebase you care about.

Run it through Plan Mode first. Answer the clarifying questions honestly, including the ones about failure modes and constraints. Then let Max Plan implement against the brief.

Do not benchmark it against Claude Code in theory. Benchmark it against your actual Claude Code workflow in your actual codebase. Measure the implementation time. Count the correction passes. Read the review summary.

That test will tell you more than any comparison review, including this one. The multi-agent approach to AI coding is architecturally sound, and of the tools I have used, CodeBuff is the first to make it practically accessible. Whether it fits your specific workflow is something only real work can tell you.

The only way to know is to install it and find out.

npm install -g codebuff

codebuff

Twelve minutes. That is what it cost me to get an answer.

🤝 Let's Work Together

Want to build AI systems, automate workflows, or scale your technical infrastructure? I am happy to help.

- 🔗 Fiverr (custom builds & integrations): fiverr.com/s/EgxYmWD

- 🌐 Portfolio: mejba.me

- 🏢 Ramlit Limited (enterprise solutions): ramlit.com

- 🎨 ColorPark (design & branding): colorpark.io

- 🛡 xCyberSecurity (security services): xcybersecurity.io