Laravel in 2026: My Framework Is Now a Platform

Three years ago, a client asked what stack I was using. When I said "Laravel," he paused and replied, "Isn't that just the PHP thing?"

I didn't argue. Partly because I was mid-deployment. Partly because — honestly — at the time, I wasn't sure how to defend it beyond "it works and I can ship fast."

Fast forward to March 2026. That same client runs a multi-tenant SaaS platform I built in Laravel. It processes 40,000+ API calls a day, integrates with three AI providers, deploys automatically to AWS via GitHub Actions, and has never had an unplanned outage longer than seven minutes. When he asked me last month what stack I used, I told him: "A strategic platform."

He laughed, thinking I was joking.

I wasn't.

Here's the thing — there's a version of Laravel most developers are still using, and there's the version actually available to them in 2026. The gap between those two realities is where entire businesses are being built or missed. What I want to close in this post is that gap.

But before we get to the architecture decisions that actually matter, you need to understand why what got you here — solid CRUD apps, clean APIs, maybe some jobs and queues — might be precisely what's limiting you from building something truly durable.

There's one question I'll come back to at the very end that reframed how I think about Laravel entirely. Keep it in mind as you read.

The Honest State of Laravel in 2026 (No Marketing Spin)

Let me be direct about something: PHP spent years fighting an image problem. Laravel, by association, carried that weight. Developers coming from Node.js or Python would give me "the look" whenever I mentioned it. I'd quietly note my hourly rate and watch their expressions shift.

What's changed in 2026 isn't the language fundamentally. PHP 8.4 is genuinely fast — sub-millisecond response times on warm requests, JIT compilation that closes the gap with compiled languages in CPU-bound workloads, and a runtime deployed on hundreds of millions of servers worldwide. The tooling is mature. The community is massive.

What's changed is the ambition.

Laravel 11.x introduced a simplified application structure that cuts boilerplate significantly. Typed configuration replaced the old mixed array approach. Pest PHP 3.x became the preferred testing framework, and the integration is seamless. Octane — Laravel's high-performance application server built on Swoole or RoadRunner — runs Laravel in persistent memory, eliminating framework bootstrap overhead entirely. I've seen this take response times from 40ms down to under 8ms on read-heavy endpoints.

The developers I respect most right now aren't the ones asking "should I still use Laravel in 2026?" They're asking "what can I build with it that I couldn't have built two years ago?"

That's the right question. And the answer has five parts — each one building on the last.

AI Won't Write Your Features, But It Will Change How You Build Them

Everyone's talking about AI replacing developers. That's not what's happening. What IS happening is more interesting, and more immediately useful if you know where to look.

I started integrating AI tooling into my Laravel projects seriously about eighteen months ago. First it was simple — wrapping OpenAI's API to generate product descriptions for a client's e-commerce platform. Quick win. Saved their content team about twelve hours a week. Fine.

What surprised me was what happened when I started using AI inside the development workflow itself, not just as a feature.

Take test generation. A client's Laravel application had roughly 200 database models and almost zero test coverage. Adding coverage manually would have taken weeks. Instead, I used a Claude claude-sonnet-4-6 integration to analyze each model's relationships, validation rules, and common query patterns — then generate Pest PHP test scaffolding. Not finished tests. Scaffolding. The AI gave me the structure and identified edge cases I would have missed. I filled in the domain-specific logic. We went from 4% coverage to 67% in nine days.

Here's what I got wrong at first: I tried using AI as a replacement for thinking. I'd dump a problem into the API and expect a complete solution. That approach consistently produced mediocre code requiring more cleanup than if I'd written it myself.

The mental model that actually works is treating AI as a senior developer who types very fast and has zero context about your specific business. You supply the context, the constraints, the "why." The AI supplies the boilerplate, the edge case identification, the draft implementation. You review, refine, own it.

In practice, for a Laravel application this means wrapping AI calls cleanly:

// Using Laravel's HTTP client for clean, testable AI integration

use Illuminate\Support\Facades\Http;

class ProductDescriptionService

{

public function generate(Product $product): string

{

$response = Http::withToken(config('services.anthropic.key'))

->timeout(30)

->post('https://api.anthropic.com/v1/messages', [

'model' => 'claude-opus-4-6',

'max_tokens' => 500,

'messages' => [

[

'role' => 'user',

'content' => "Write a 150-word product description for: {$product->name}.

Category: {$product->category}.

Key attributes: {$product->attributes->implode(', ')}."

]

]

]);

return $response->json('content.0.text');

}

}

This is clean, testable, and runs in a Laravel job that respects rate limits. The critical architectural decision: wrap AI calls in service classes that queue through Laravel Horizon. A flaky API response at 3 AM shouldn't take down user-facing features.

The problem I kept seeing — and had to solve for a client — is AI costs spiraling out of control. Teams rack up $4,000+ in API bills in a month because they put AI calls in synchronous request handlers without caching or throttling. The fix is Laravel's cache layer combined with model selection strategy: lighter models for draft generation, expensive models only for final output. Cache the results aggressively for content that doesn't need to be unique on every call.

That's the AI story. But it only works if your infrastructure can support it at scale. Which brings us to the part most developers underestimate until something breaks in production.

Why "Cloud-Native" Isn't a Buzzword — It's a Failure Prevention Strategy

I want to be honest here: I'm not anti-monolith. I've defended the Laravel monolith in technical discussions more times than I can count. For teams of one to five developers, a well-structured monolith is often the right choice.

But there's a specific failure pattern I keep seeing. Developers build a Laravel monolith, it works great up to around 10,000 monthly active users, and then something unexpected hits — a viral launch, a business pivot, a feature requiring real-time collaboration. Suddenly the monolith is a constraint, and the migration cost is enormous.

The developers who avoid this aren't microservices zealots. They make specific architectural decisions early that keep their options open.

Here's what that looks like in practice.

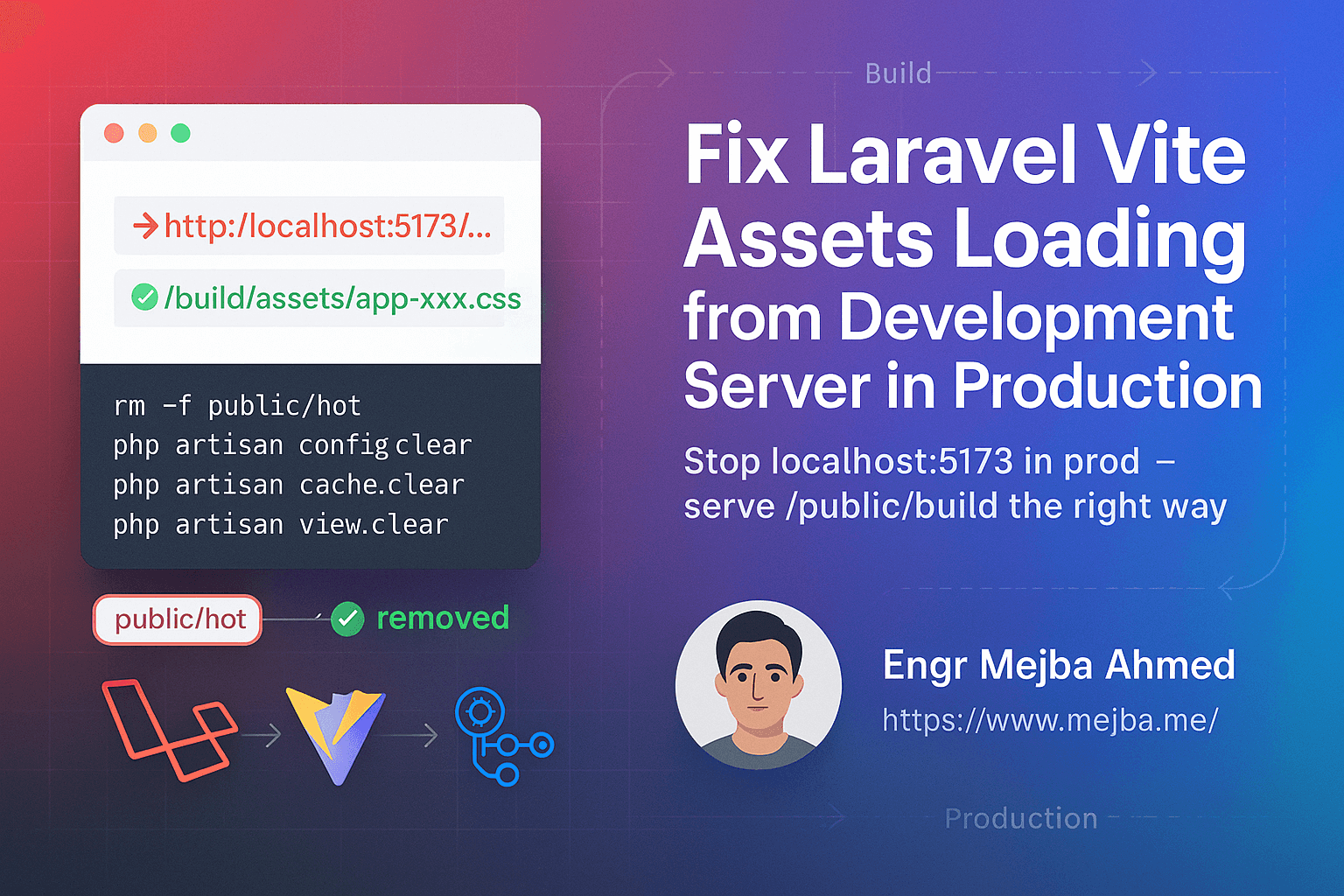

Containerization done right, not just done. Most tutorials show a basic Dockerfile. What they don't show is a production-ready multi-stage build that separates dev and prod dependencies, caches composer layers properly, and produces an image under 150MB. Image size directly impacts cold start time on ECS and container boot time on Kubernetes.

# Stage 1: Composer dependencies

FROM composer:2.7 AS composer-stage

WORKDIR /app

COPY composer.json composer.lock ./

RUN composer install --no-dev --no-scripts --optimize-autoloader --prefer-dist

# Stage 2: Production image

FROM php:8.4-fpm-alpine AS production

WORKDIR /var/www/html

# Install only what production needs

RUN docker-php-ext-install pdo_mysql opcache

RUN pecl install redis && docker-php-ext-enable redis

# Copy optimized vendor from composer stage

COPY --from=composer-stage /app/vendor ./vendor

COPY . .

# Cache Laravel artifacts at build time, not runtime

RUN php artisan config:cache && \

php artisan route:cache && \

php artisan view:cache

EXPOSE 9000

CMD ["php-fpm"]

This build process cut deployment time for one project from 4.5 minutes to 1.8 minutes. Small in isolation. Significant when you're deploying five times a day.

Laravel queues as the architectural seam. An underrated decision: if you might need to extract a feature into its own service later, build it as a queued job first. Jobs have clean input/output contracts. They're easy to move to a dedicated worker. They're observable with Horizon. When you eventually need to extract a feature — maybe because image processing is consuming 80% of your CPU — you have clean seams to cut along.

Serverless for burst workloads, not everything. I've been using Laravel Vapor for specific client projects, and the model holds up well for event-driven workloads: end-of-month report generation, image processing, webhook handling. You don't pay for idle time, and you get automatic scaling with zero infrastructure management.

The catch nobody mentions: cold starts. A Laravel app on Lambda can take 800ms–1.2s to cold start without optimization. Octane on persistent compute outperforms Lambda every time for latency-sensitive endpoints. This is a genuine trade-off — know which you need before you commit to either.

When "Refresh the Page" Becomes the Wrong Answer

At this point you've got AI integration and cloud architecture mentally mapped. Good. But here's the problem with every enterprise application I've inherited as a consultant: they built the backend right and forgot that users sit in front of a screen expecting things to happen without clicking refresh.

Real-time isn't about chat apps. It's about order statuses updating without page reloads. Live dashboards that don't require a JavaScript polling hack every two seconds. Collaborative editing where two users don't overwrite each other's changes.

Laravel's answer in 2026 is Reverb — the official WebSocket server released with Laravel 11. Before Reverb, you needed a managed service like Pusher (cost and latency) or a self-managed Soketi instance (ops burden). Reverb runs alongside your Laravel app, handles WebSocket connections natively, and integrates directly with Laravel's broadcasting system.

Setup is genuinely clean:

# Install and configure Reverb

php artisan install:broadcasting

# Start the WebSocket server

php artisan reverb:start --host=0.0.0.0 --port=8080

On the backend, broadcasting is three lines:

class OrderStatusUpdated implements ShouldBroadcast

{

public function __construct(public Order $order) {}

public function broadcastOn(): Channel

{

return new PrivateChannel('orders.' . $this->order->id);

}

}

In your Vue 3 + Inertia.js frontend:

import Echo from 'laravel-echo'

import Pusher from 'pusher-js'

window.Pusher = Pusher

const echo = new Echo({

broadcaster: 'reverb',

key: import.meta.env.VITE_REVERB_APP_KEY,

wsHost: import.meta.env.VITE_REVERB_HOST,

wsPort: import.meta.env.VITE_REVERB_PORT,

forceTLS: false,

enabledTransports: ['ws', 'wss'],

})

// Listen for real-time order updates

echo.private(`orders.${orderId}`)

.listen('OrderStatusUpdated', (event) => {

order.status = event.order.status

order.updatedAt = event.order.updated_at

})

The part that trips people up: private channel authentication. Laravel handles this automatically through the /broadcasting/auth route, but you need to define your channel authorization in routes/channels.php. Miss this and you'll spend an afternoon debugging 403 errors in WebSocket handshakes. Ask me how I know.

If you've made it this far, you already have a mental model for AI integration, cloud architecture, and real-time features. Most posts stop at the architecture overview. The next section is where the real operational discipline lives — and where the difference between a system that scales and one that collapses under load becomes visible.

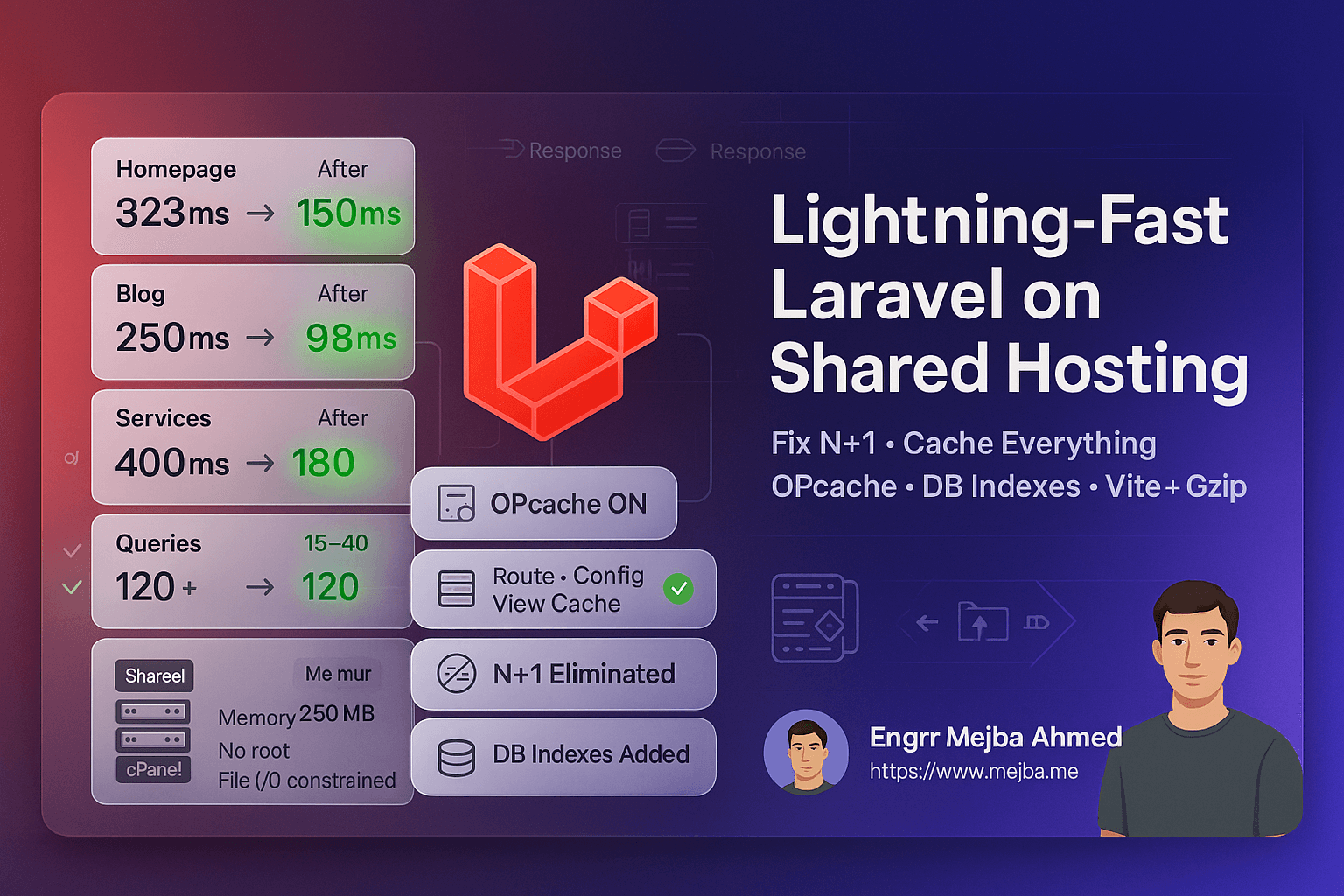

The Performance Problems You Don't See Until It's Too Late

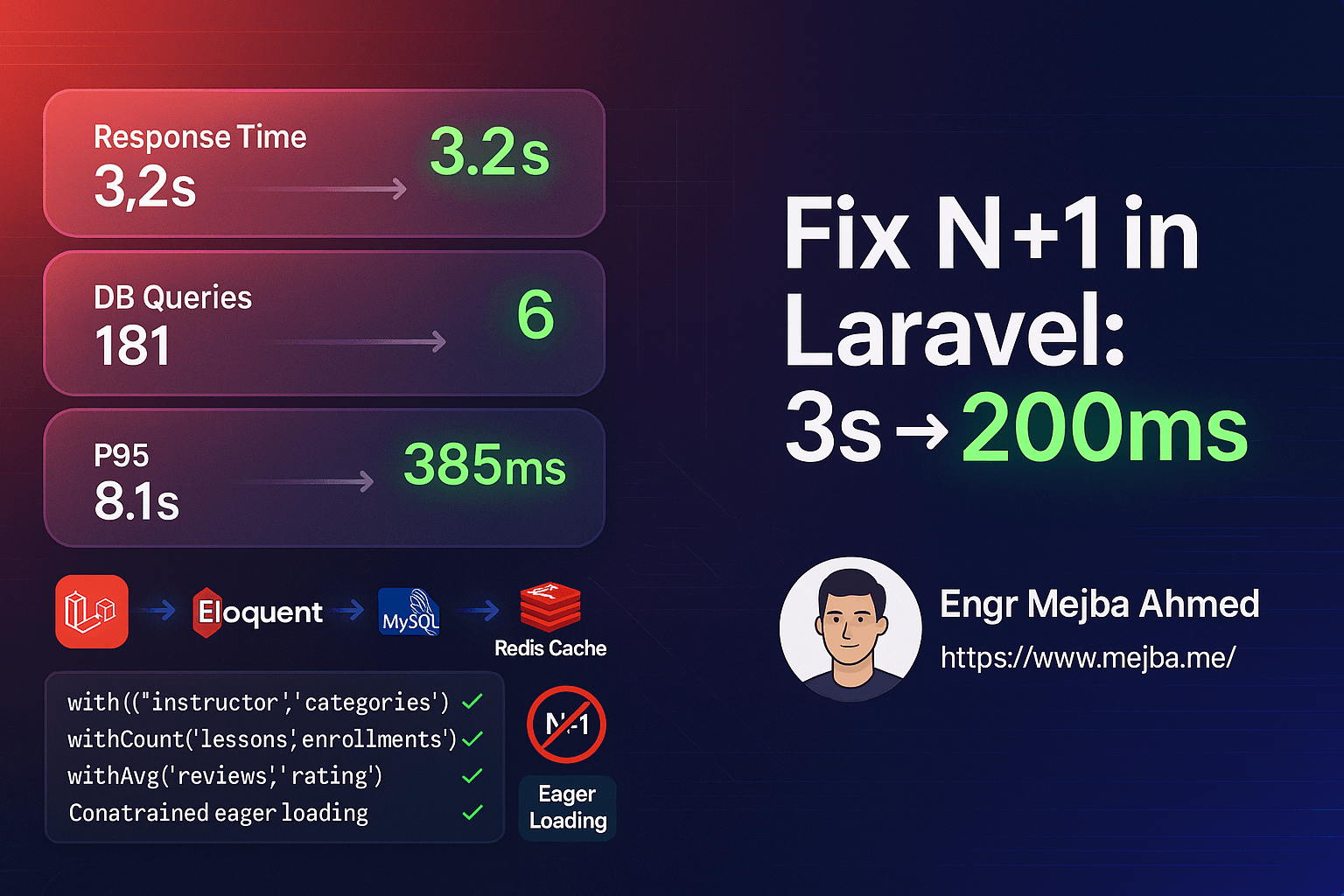

A confession: I once deployed a Laravel application that worked perfectly in staging and fell over in production within 48 hours. Load was higher than expected. Unoptimized queries became obvious. The N+1 problem I'd dismissed as "something to fix later" turned into a P0 incident at 2 AM.

That experience changed how I think about performance. You don't add it at the end. You build it in as a discipline from the start.

Three areas where Laravel applications most commonly fail under load:

Unoptimized Eloquent queries. The ORM is excellent. It's also easy to abuse. Every time you write $post->author->name inside a loop without eager loading, you make a separate database query per iteration. On a list of 50 posts, that's 51 queries instead of 2. Laravel Telescope makes this painfully visible in development — install it, run through your critical paths, and look at the query count. Anything over 20 queries for a single page load deserves investigation.

// This creates an N+1 problem

$posts = Post::all();

foreach ($posts as $post) {

echo $post->author->name; // 1 query per post = N+1 total

}

// This doesn't — eager load everything you know you need

$posts = Post::with(['author', 'tags', 'category'])->paginate(25);

foreach ($posts as $post) {

echo $post->author->name; // already in memory

}

Careless Redis usage. Redis is not magic. I've inherited applications where developers cached everything "just in case" and ended up with cache keys expiring every 30 seconds, cache stampedes hitting the database harder than no cache at all, and memory bloat causing Redis to evict active sessions. The right approach: cache the expensive stuff — aggregate queries, third-party API responses, computed values — with intentional TTLs. Use Cache::remember() for automatic cache-or-compute patterns. Run separate Redis instances for sessions, cache, and queues. They have different eviction policies and shouldn't compete for the same memory pool.

Queue structure that wasn't designed, just accumulated. Laravel Horizon is powerful, but it only helps you if you structured your queues intentionally. High-priority jobs — email confirmations, payment processing, authentication events — should run on separate queues from low-priority work like weekly digest emails or analytics aggregation. Mixing them means a backlog of report generation jobs can delay password reset emails. That's a support ticket you don't want.

// Dispatch critical work to its own queue

ProcessPayment::dispatch($order)->onQueue('critical');

// Background analytics can wait

RecordUserActivity::dispatch($event)->onQueue('low');

// config/horizon.php — supervisor watching queues in priority order

'supervisor-1' => [

'connection' => 'redis',

'queue' => ['critical', 'high', 'default', 'low'],

'balance' => 'auto',

'minProcesses' => 1,

'maxProcesses' => 10,

],

Alright. You now have the full picture — AI integration, cloud architecture, real-time features, and performance discipline. The last technical layer is the one that determines whether enterprises trust your application with their data. And this is where Laravel surprised me the most.

Laravel Isn't a Startup Framework Anymore — And That Changes Your Responsibility

The engineering leads I talk to who are evaluating Laravel for enterprise projects all ask the same question: "We love the developer experience, but can it handle our compliance requirements?"

The answer in 2026 is yes — but it requires deliberate choices, not just good defaults.

Laravel ships with CSRF protection, automatic SQL injection prevention through the query builder, XSS protection via Blade's automatic HTML encoding, and bcrypt/Argon2 password hashing. These defaults are genuinely good. But defaults aren't enough for regulated industries.

For a healthcare client last year, I implemented field-level encryption for all PII data at rest using Laravel's built-in encryption helpers. Every Eloquent model storing patient-adjacent data used an encrypted cast:

// Models automatically encrypt/decrypt sensitive fields

protected $casts = [

'date_of_birth' => 'encrypted',

'ssn_last_four' => 'encrypted',

'diagnosis_notes' => 'encrypted',

'contact_phone' => 'encrypted',

];

Combined with Laravel Sanctum for API token authentication, activity logging via Spatie's Laravel Activity Log (v4.x), proper database-level role separation, and audit trails on every sensitive operation, the system passed a HIPAA compliance review. Not because Laravel made compliance automatic — because the framework gave us the primitives to implement compliance correctly without fighting the tool.

Role-based authorization at scale deserves its own attention. Spatie's laravel-permission package (v6.x) is the de facto standard, and the integration with Eloquent is clean. Where teams trip up is in designing the permission structure upfront. A common mistake: creating individual permissions for every granular action. Multiply that across 50 features and you have a permission matrix no one can maintain.

My rule: start with roles — admin, manager, member, viewer. Add individual permissions only when a role-level distinction doesn't work. You can always add granularity later. Removing it from a production system with thousands of users is painful.

// Clean role-based gates in policy classes

class ReportPolicy

{

public function view(User $user, Report $report): bool

{

return $user->hasAnyRole(['admin', 'manager'])

|| ($user->hasRole('member') && $report->team_id === $user->team_id);

}

public function export(User $user): bool

{

return $user->hasRole(['admin', 'manager']);

}

}

The integration story — Laravel as the backend for mobile apps, IoT devices, and AI-driven platforms — is genuinely compelling right now. I've shipped REST APIs consumed by iOS apps, WebSocket servers driving real-time industrial dashboards, and AI orchestration layers where Laravel queues coordinate multi-step Claude workflows. The framework handles all of it. The constraint is always the architect's decisions, not the tool's capability.

What I Got Wrong About Laravel — The Honest Version

I used to argue that Laravel was the wrong choice for high-throughput, low-latency microservices. My reasoning: PHP's share-nothing architecture meant each request bootstrapped the entire framework, and that overhead was prohibitive at scale.

I was partially right and mostly wrong.

Octane changed the calculus entirely. With RoadRunner or Swoole, Laravel runs in persistent processes with no per-request bootstrap overhead. I've benchmarked Octane-powered endpoints at 12,000+ requests per second on modest hardware — 4 CPU cores, 8GB RAM. That's competitive with many Go microservices I've seen in production, running code my team already knows how to write and debug.

The trade-off I initially missed: Octane requires careful attention to state. Global variables, singleton services storing per-request data, static properties — all of these persist between requests in an Octane environment. That's a bug factory if you're not deliberate. Every service touching per-request state needs explicit reset between requests using Octane's flush mechanism. We spent a full sprint tracking down a subtle bug caused by a cached user object persisting across requests. Not fun.

The other thing I underestimated: how much the ecosystem matters long-term. Laravel's package ecosystem — Spatie's collection alone, plus Cashier, Passport, Sanctum, Telescope, Horizon, Reverb, Octane, Vapor — means common problems have solutions you don't need to build. That's developer time redirected from infrastructure to product. Over two years on a project, the compounding value is real and measurable.

Here's an unpopular take worth having: not every project needs a microservices architecture. Companies pitching "event-driven microservices" on day one are often the same ones three years later paying four engineers to maintain a distributed system a two-person team could have handled with a well-structured monolith and intentional queue configuration. Architecture should match team size, traffic patterns, and organizational maturity — not trend cycles.

What This Actually Looks Like When It Works

Concrete numbers from a project I worked on — not hypothetical benchmarks.

A multi-tenant SaaS platform, rebuilt in Laravel 11 + Octane + Reverb, serving 340 business accounts across three countries. Before the rebuild, the legacy system averaged 2.1 seconds page load, experienced weekly downtime during peak usage, and the team spent roughly 60% of sprint time on bug fixes rather than features.

After six months on the new stack:

- Average response time: 180ms on API endpoints, 340ms on full Inertia.js page loads

- Uptime: Zero unplanned outages in the four months post-launch

- Feature velocity: 3x improvement measured in story points per sprint

- Infrastructure cost: 23% reduction despite 40% traffic growth — achieved by right-sizing queue workers and using Vapor for batch processing instead of always-on compute

The honest version: the first three months were rough. Data migration is always harder than anyone forecasts. The Octane state issues cost a full sprint to find and fix. The permission system got rewritten twice because the initial design didn't account for multi-tenant context correctly.

Quick wins vs. long-term gains: Octane is a one-hour configuration change with immediate impact. AI integration patterns take a week to get right but compound over months. Compliance architecture takes longer — but unlocks enterprise contract sizes and the trust that comes with them.

What to actually measure: response time at the 95th percentile (not average — averages hide outliers), queue throughput during peak hours, cache hit rate per endpoint (target above 80% for read-heavy routes), and error rate by queue priority. Laravel Telescope and Horizon give visibility into all of this. Use them from day one.

The Challenge I'm Leaving You With

Here's the one thing I want you to do before your next sprint.

Open your Laravel application. Run php artisan telescope:install if you haven't yet. Walk through three of your most-used routes and look — genuinely look — at the query count per request. Don't fix anything yet. Just observe.

Fewer than five queries and cache hit rate above 70%? You're in solid shape. Seeing 40+ queries and zero cache hits? You have three months of performance work ahead of you that will pay dividends every single day after that work is done.

The developers who treat Laravel as a strategic platform — not just a framework to ship features — are the ones who audit first, design systems before building features, and make architectural decisions that age well. The ones who treat it as "just the PHP thing" keep rebuilding the same applications every three years when the tech debt compounds past the breaking point.

The intersection of frameworks like Laravel, AI automation, and cloud-native design is going to look obvious in retrospect a decade from now. The engineers figuring out that intersection practically — not theoretically — are the ones getting the interesting projects and the client relationships that compound over years.

That question I promised to come back to: what does your Laravel application reveal about the architect behind it?

Take a look. The answer is already in there.

🤝 Let's Work Together

Looking to build AI systems, automate workflows, or scale your tech infrastructure? I'd love to help.

- 🔗 Fiverr (custom builds & integrations): fiverr.com/s/EgxYmWD

- 🌐 Portfolio: mejba.me

- 🏢 Ramlit Limited (enterprise solutions): ramlit.com

- 🎨 ColorPark (design & branding): colorpark.io

- 🛡 xCyberSecurity (security services): xcybersecurity.io